by Admin | May 30, 2022 | Peoples, Executive Board, Global Enlightenment Leaders, Board of Directors

Yasuhide Nakayama was first elected as a member of the House of Representatives in 2003, and since then has been reelected to the Diet on five occasions as a representative for the ruling Liberal Democratic Party (LDP).

During that time he served as State Minister for Foreign Affairs (under Prime Minister Abe) and most recently as the State Minister of Defense/State Minister of Cabinet Office (under Prime Minister Suga). He has also occupied various key Diet posts, including Chair of the House of Representatives Committee on Foreign Affairs.

In November 2021, Nakayama was appointed Special Advisor for Foreign Affairs to the LDP (to the Chairperson of the Policy Research Council for Foreign Affairs, Defense and Game-Changing Fields).

by Admin | May 29, 2025 | News

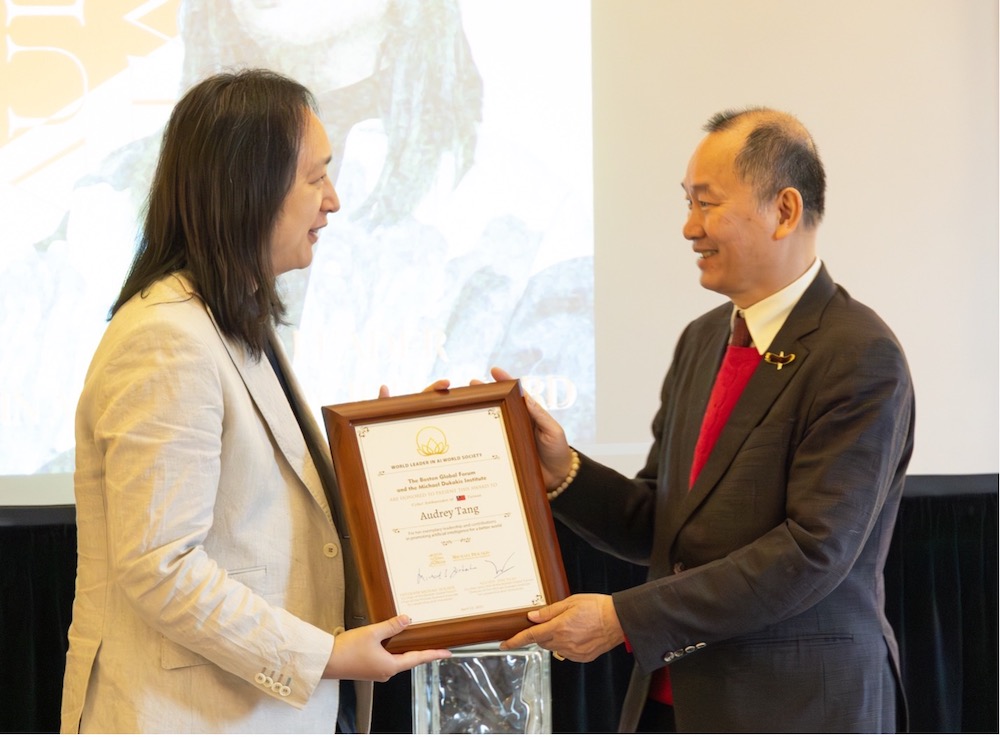

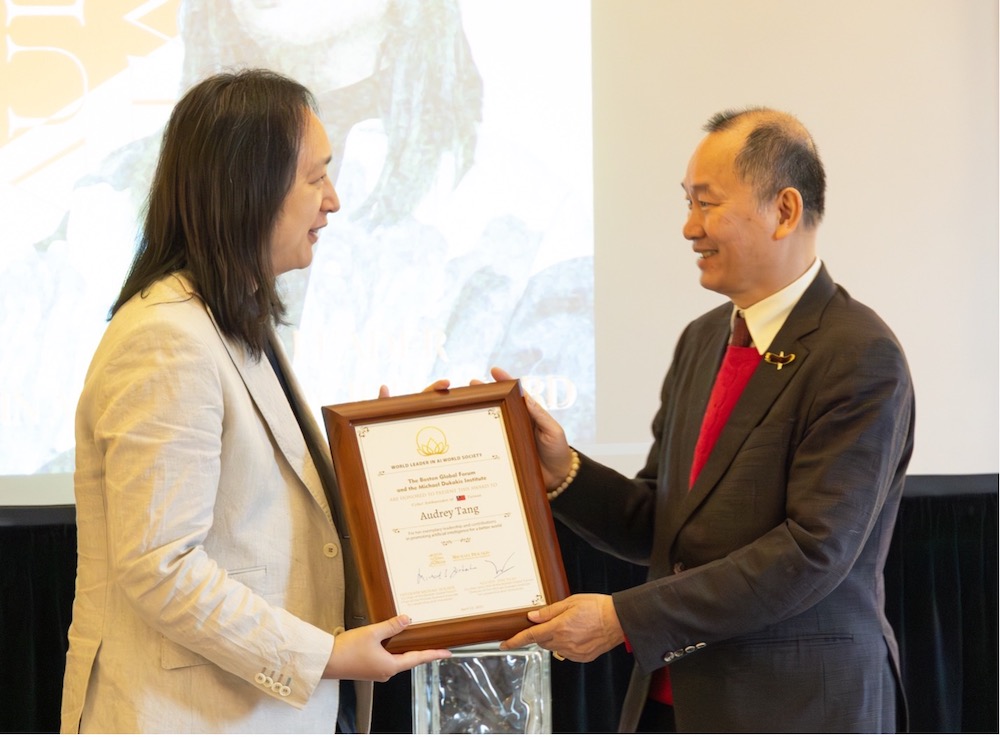

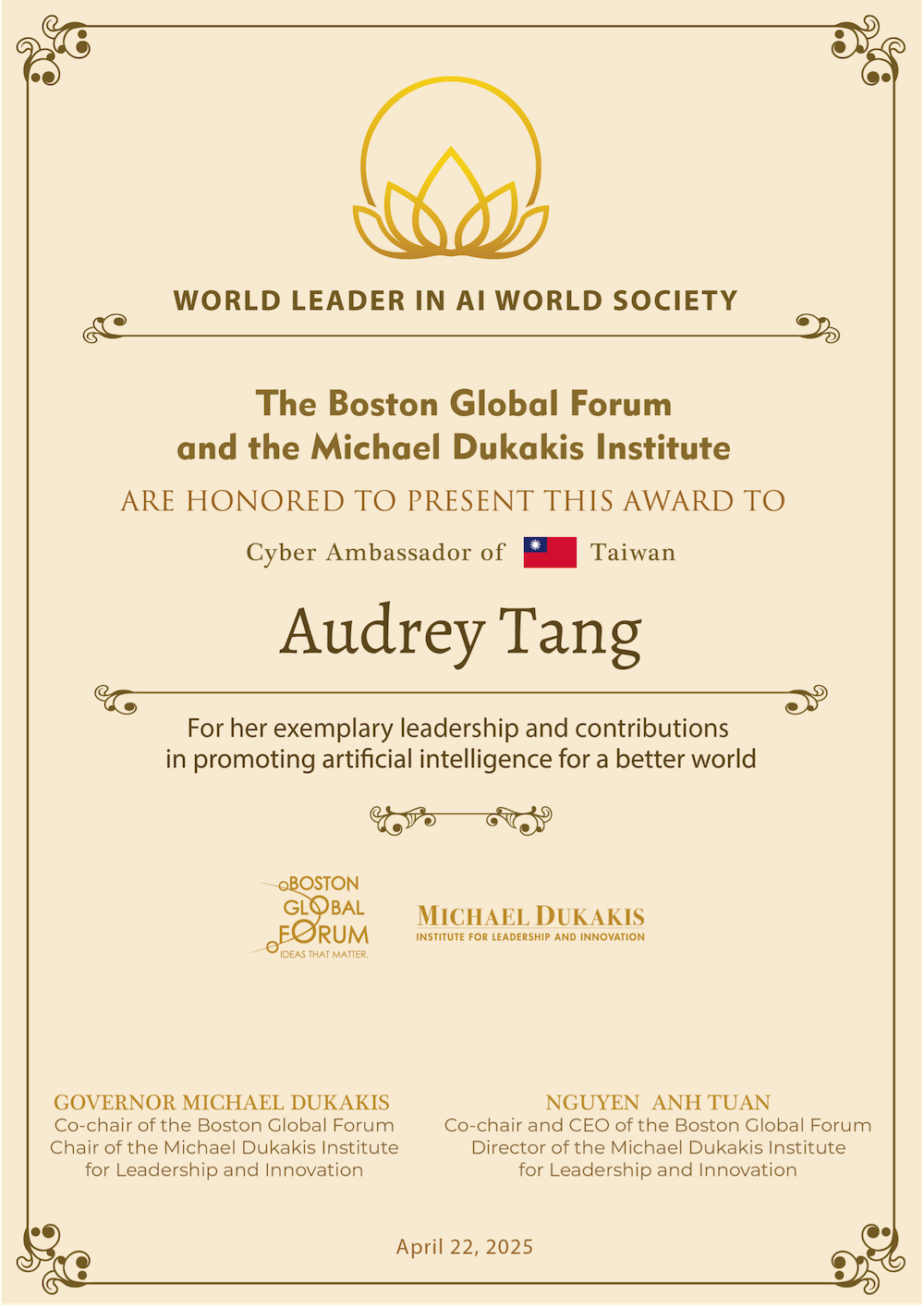

On April 22, 2025, at Harvard University’s historic Loeb House, the Boston Global Forum presented the World Leader in AI World Society (AIWS) Award to Taiwan’s Cyber Ambassador, Audrey Tang.

Governor Michael Dukakis, in his remarks, praised Audrey as “an extraordinary individual whose vision, courage, and brilliance have reshaped the landscape of governance and freedom in the AI Age.” From child prodigy to cabinet minister, from civic tech pioneer to international thought leader, Audrey has redefined what it means to lead in the age of artificial intelligence.

Audrey’s acceptance speech was a moving call to action for a future built on transparency, prosocial participation, and “plurality over polarization.” Reflecting on Taiwan’s digital democracy successes—from the g0v movement and vTaiwan platform to real-time citizen engagement—Audrey reminded us that technology must amplify collective wisdom, not divide it. As she put it: “Sunlight is an immunization against manipulation.”

She closed with a poem—a vision of the future where machines enhance, rather than replace, human values:

“When we see ‘internet of things,’ let’s make it an internet of beings… When we hear ‘the singularity is near,’ let us remember: the Plurality is here.”

Audrey’s work is not just technical leadership—it is moral leadership. As the global community navigates the challenges of AI governance, Audrey’s example lights the way forward.

Remarks by Governor Michael Dukakis Honoring Audrey Tang

Boston Global Forum Conference

Boston Finance Accord for AI Governance 24/7

Harvard University Loeb House, 17 Quincy Street, Cambridge, MA

April 22, 2025

Ladies and gentlemen, it is my profound privilege to honor an extraordinary individual whose vision, courage, and brilliance have reshaped the landscape of governance and freedom in the AI Age—Audrey Tang, Taiwan’s Cyber Ambassador, the world’s first nonbinary cabinet official, and the Boston Global Forum’s 2025 World Leader in AIWS Award recipient.

Audrey, your journey is nothing short of inspiring. A child prodigy mastering mathematics at six and programming by eight, you were a Silicon Valley innovator by nineteen. Yet, it is your fusion of technical genius with a deep commitment to pluralism and democracy—nurtured in a pro-democracy family—that truly sets you apart. You saw the internet not just as code, but as a bridge to unite people through shared dreams, a vision you’ve carried from Taiwan to the world.

Your impact is transformative. Through Taiwan’s g0v civic tech community, you pioneered platforms like Join.gov.tw, empowering citizens to shape policies—from tax software to cancer treatment reforms—with unprecedented transparency. During the 2014 Sunflower Movement, your livestreaming of a controversial trade pact turned deliberation into a public act of courage, cementing Taiwan as Asia’s beacon of freedom. In the face of COVID-19, your ingenuity—think mask maps and fact-checking tools—showed the world how technology can serve humanity with clarity and compassion.

As a “conservative anarchist,” you’ve redefined governance, blending radical openness with practical wisdom. Your leadership in Taiwan’s Ministry of Digital Affairs and now as Cyber Ambassador has made collective decision-making a reality, inspiring the AI World Society’s vision of Government 24/7. Just last month, at the 4th Shinzo Abe Conference in Tokyo, your insights enriched our Boston Finance Accord—a testament to your global influence, recognized by TIME Magazine’s 2023 Top 100 AI Influential People and today’s AIWS Award.

Audrey, you’ve said you aim to be a “good enough ancestor for future generations.” To us, you are already a guiding light, honoring Shinzo Abe’s legacy of innovation and cooperation while forging a path toward global enlightenment. On behalf of the Boston Global Forum, I salute you for your unwavering dedication to transparency, inclusion, and a better tomorrow. Thank you, Audrey Tang, for inspiring us all.

https://www.youtube.com/watch?v=ahczjdSgNjI

Acceptance Speech by Audrey Tang


Cyber Ambassador of Taiwan

Recipient of the World Leader in AI World Society Award

Harvard University, Loeb House – 17 Quincy Street, Cambridge, MA

April 22, 2025 | 8:00 AM – 11:30 AM EDT

Governor Dukakis, Co-Chairs Mr. Tuan and Professor Patterson, distinguished guests—good morning.

Standing in Harvard’s Loeb House to receive the World Leader in AIWS Award, I feel honored — and compelled. Climate shocks, border crises, cyber-conflict: today’s threats travel at machine speed, yet their solutions still rely on the tempo of human trust. Our task is to keep that trust synchronized—twenty-four hours a day, seven days a week.

People often ask what a “Cyber Ambassador” does. I answer: I help societies steer through transformative technologies. The word cyber comes from the Greek kybernetes, “to steer,” and it was Norbert Wiener who gave “cybernetics” its modern meaning here in Boston.

Today’s Boston Finance Accord for AI Governance 24/7 shows how purposeful steering can turn artificial intelligence into assistive intelligence, technology that amplifies our collective wisdom and compassion.

Why does this matter? Consider five sets of figures from Taiwan’s past decade of digital democracy:

- First, over 20 000 participants in the g0v civic-tech network have “forked” government websites into open-source versions that citizens actually prefer.

- Second, 500 000 citizens joined the 2014 Sunflower rallies, livestreamed by those same volunteers, pushing the government to adopt radical transparency as our national ethos.

- Third, the gov.tw platform, visited by 10 000 people every day, has given everyone in Taiwan a direct voice in policy, and 80 % of issues deliberated on vTaiwan have led to decisive action.

- Fourth, during COVID-19, an open-data “mask map” went live in 48 hours; Taiwan avoided lockdowns entirely, yet our economy grew by more than 12 % during the three years of the pandemic.

- Last but not least, today 91 % of Taiwanese say democracy is “fairly good”, our 15-year-olds top the world in terms of civic knowledge, and we rank among the least socially polarized societies globally.

And because no democracy is an island—not even Taiwan—those civic muscles must expand across oceans, cultures, and time zones. When one society’s guardrails wobble, tremors ripple everywhere.

I accept this award on behalf of communities that made those numbers possible. Their lesson is simple: sunlight is an immunization against manipulation. That’s the lesson of every number I just shared.

by Admin | Jan 26, 2025 | News

Saigon International University (SIU): A Historic Milestone for AIWS Government 24/7

January 11, 2025 | Ho Chi Minh City, Vietnam

At the SIU Prize Computer Science Award Ceremony and the SIU Shaping Futures International Conference titled “AI for a Better World,” AIWS Government 24/7 reached a significant milestone with the following joint statement:

“Ho Chi Minh City, January 11, 2025

Saigon International University (SIU)

Today, at The Saigon International University (SIU) Prize Computer Science Award Ceremony and the international conference SIU Shaping Futures on ‘AI for a Better World,’ SIU Prize Laureates, SIU Prize Candidates, speakers, scientists, and participants join SIU in calling on governments, the business community, and academic institutions worldwide to collaborate and work toward realizing the AIWS Government 24/7 and Boston Areti AI (BAI) model.

Shaping Futures on ‘AI for a Better World’

SIU’s commitment to pioneering research, inclusive education, and ethical AI practices underscores our dedication to nurturing the next generation of global innovators. By building the AIWS Government 24/7 framework and implementing BAI, we believe nations can deliver more responsive public services, ensure transparency, and ultimately create a better world through responsible AI integration.”

For more details, visit:

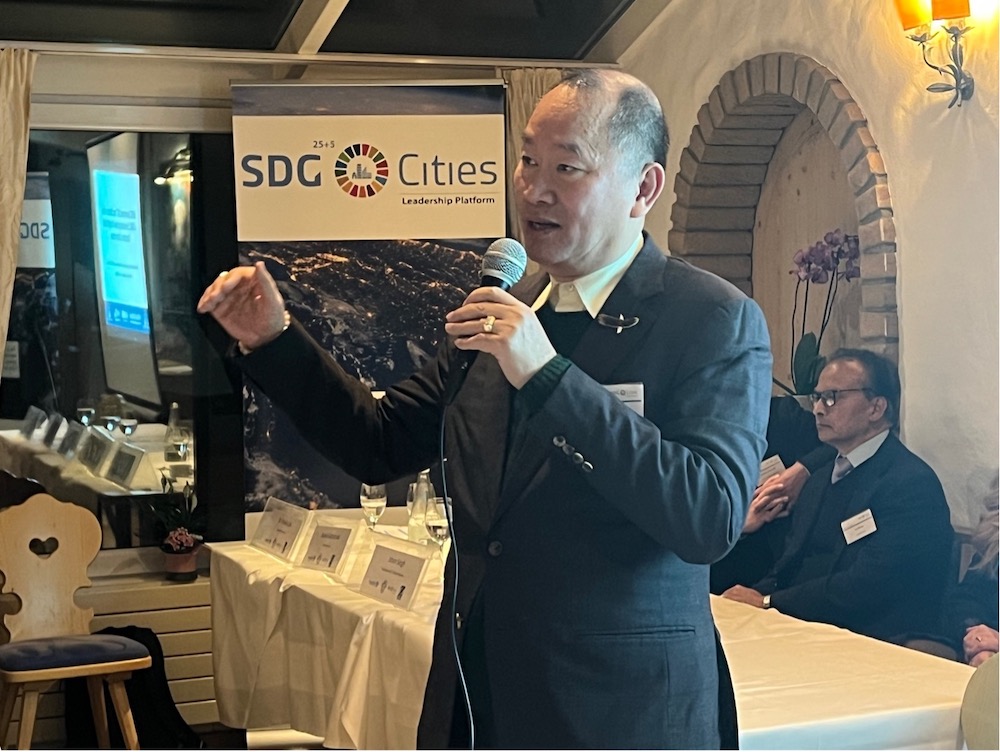

AIWS Government 24/7 Introduced at SDG LAB 2025 Davos: “SDG10 – Nobody Left Behind”

January 19-23, 2025 | Victoria Hotel, Davos

At SDG Lab 2025 in Davos, Nguyen Anh Tuan, Co-founder, Co-chair, and CEO of the Boston Global Forum, presented the groundbreaking concept of AIWS Government 24/7 during the session titled “AI Champions for the Common Good.” His innovative vision highlighted the transformative role of AI in achieving Sustainable Development Goals (SDGs), particularly in fostering a world where no one is left behind.

The SDG Lab, established in Davos in 2010, has become a unique platform for thought leaders committed to advancing sustainability and resilience. This year’s theme, “SDG10 – Nobody Left Behind,” underscores the importance of reducing inequalities and promoting inclusivity.

During this event, Nguyen Anh Tuan was honored with the UNGSII Award in recognition of his outstanding leadership and contributions to advancing SDGs. This accolade further cements his status as a global leader in leveraging AI for the common good and sustainability.

by Admin | Jul 14, 2024 | News

This is a transcript from a segment of the NPR show Weekend Edition Sunday.

A new lawsuit filed by record labels Universal, Sony and Warner says their catalogs have been ripped off by two AI music generators. But there’s a twist: It’s not clear the courts are on their side.

AYESHA RASCOE, HOST: The music industry is coming for artificial intelligence. A new lawsuit filed by the Big 3 record labels – Universal, Sony, and Warner – says their catalogs have been ripped off by two AI music generators. But there’s a twist. It’s not clear that the courts are on the side of record labels. NPR’s Bobby Allyn explains why.

BOBBY ALLYN, BYLINE: The two companies are Suno and Udio. Here’s how they work. Type a description into a search box, and seconds later, you get an AI song. So if you type 70s pop into the search, you might get a song it calls “Prancing Queen.”

ALLYN: Sure does sound a whole lot like Swedish pop group ABBA’s 1976 hit “Dancing Queen,” doesn’t it?

ALLYN: Hmm, artistic inspiration? Mitch Glazier doesn’t think so. He says this is wholesale theft. He’s the CEO of the Recording Industry Association of America. Along with major record labels, he’s suing to try to put a stop to these services.

MITCH GLAZIER: This case is a basic copyright case. It’s about two companies – Suno and Udio took music made by other people without permission, and without compensation, and make money.

ALLYN: The AI companies wouldn’t make anyone available for an interview. But in statements, they said the tools are not memorizing and regurgitating music. They say, OK, the music might reflect some ideas or themes similar to, say, ABBA, but it’s something new entirely. The case is about two sides focusing on different things. The music industry says, focus on the music that was copied, the input. And the AI companies say, focus on the new thing the tools are spitting out, the output.

RICHARD BUSCH: It’s not a clear-cut case.

ALLYN: That’s Richard Busch. He’s a big deal in the copyright world. He’s a lawyer who won a multimillion dollar case against Robin Thicke and Pharrell Williams over their 2013 song “Blurred Lines” for illegally copying Marvin Gaye. He’s devoted his life to going after artists who have ripped others off. But he thinks the AI companies here might actually have an argument.

BUSCH: How is this different than a human brain listening to music and then creating something that is not infringing, but is influenced.

ALLYN: Busch says both sides of the copyright debate, the input and the output, will be important when hashing this out. I ask Glazier with the recording industry to point to an AI song that best sums up the case. And he said Jason Derulo, who is known in the R&B world for his so-called tags at the beginning of songs. He gives his own name a shoutout – like this.

ALLYN: The AI tools ban searches that specifically name artists. But if you type in contemporary R&B, male singer, soaring ballads, catchy dance pop, you hear – well…

GLAZIER: You can hear Jason Derulo’s tag in the output. So pretty convincing proof that the company actually did copy the song without permission.

ALLYN: And Glazier with the recording industry says there are many hundreds of other examples. The suit asks for up to $150,000 per infringed song, so if they win, it could add up fast. The recording industry has something of a playbook for this kind of case. It helped bring down music file-sharing service Napster. Nearly 25 years later, AI is the new enemy. Busch, the music copyright lawyer, says he thinks that, with time, both sides will reach some kind of deal before this goes to trial.

BUSCH: Every single time there’s new technology, this is what happens. Everyone gets up in arms. Everyone says this is the end of the world, and then everyone says, wait a second. There’s a way for everybody to make money.

ALLYN: Take streaming services like Spotify. The record industry once resisted it. Now, streaming music has pushed music industry profits to new highs. Bobby Allyn, NPR News.

by Admin | Jul 14, 2024 | News

At the launching ceremony of the Youth Innovation and Entrepreneurship Club in Nha Trang City on June 26, 2024, Japanese State Minister Yasuhide Nakayama, BGF Chief in Japan, unveiled an ambitious proposal known as the Nha Trang Heart Initiative. This initiative aims to foster closer ties between the residents of Nha Trang City and key cities in Japan, particularly Tokyo and Osaka, by promoting collaboration in technology, education, business, tourism, and culture. Emphasizing a blend of Japanese values such as ethics, humility, responsibility, reliability, fairness, and kindness with Vietnamese qualities of openness, dynamism, adaptability, friendliness, and warmth, the initiative seeks to create symbolic characters in the Age of AI within the AI World Society framework. This initiative is a part of the Shinzo Abe Initiative for Peace and Security, reflecting Japan’s commitment to cooperation and mutual understanding, from regional issues of the Indo-Pacific to global challenges.
