by Editor | Aug 25, 2019 | Statements, News

Japan and the US have expressed alarm after South Korea formally withdrew from an intelligence-sharing deal with Tokyo.

Analysts said the move could jeopardise efforts to track and curtail North Korea’s nuclear weapons programme.

Senior officials exchanged angry statements on Friday amid what experts are calling the worst crisis in Japan-South Korea relations in decades, with Prime Minister Shinzo Abe saying the decision had “damaged mutual trust”.

Mr. Abe, before leaving to join the G7 summit in France, said that Tokyo “will continue to closely coordinate with the US to ensure regional peace and prosperity, as well as Japan’s security”.

“The situation is escalating, and it’s hard to see how the spiralling conflict can be stopped,” said Koichi Ishizaka, an expert on intercultural communication and a professor at Rikkyo University in Tokyo.

Mr. Ishizaka said Mr. Abe probably also believes he can score domestic political points by taking a hard stance on South Korea, despite the close cultural ties between the two countries.

“Although cordial exchange between the people is working for a brighter future, politics has taken a step back and has not caught up with that,” he said.

The original article can be found here.

The Boston Global Forum honored Prime Minister Shinzo Abe with the World Leader for Peace and Cybersecurity Award on Global Cybersecurity Day, December 12, 2015, at the Harvard University Faculty Club.

by Editor | Aug 25, 2019 | News

We live in times of high-tech euphoria marked by instances of geopolitical doom-and-gloom. There seems to be no middle ground between the hype surrounding cutting-edge technologies such as Artificial Intelligence (AI), including their impact on security and defence, and anxieties over their potentially destructive consequences. AI, arguably one of the most important and divisive inventions in human history, is now being glorified as the strategic enabler of the 21st century and the next domain of military disruption and geopolitical competition. The race for technological innovation, justified by its significant economic and security benefits, is widely expected to make early adopters the next global leaders.

Technological innovation and defence technologies have always occupied central positions in national defence strategies, so this emphasis on techno-solutionism in military affairs is nothing new. Unsurprisingly, in the context of national security, Artificial Intelligence is often discussed as a potentially disruptive weapon and likened to earlier transformative technologies such as nuclear and cyber. However, this framing is problematic because it casts AI as one-dimensional. In reality, AI has broad, dual-use applications and is more appropriately compared to enabling technologies such as electricity or the combustion engine.

Growing competition in deep-tech fields such as AI is undoubtedly affecting the global race for military superiority. Leading players such as the US and China are investing heavily in AI research and accelerating its use in defence. In Russia, the US, and China, political and strategic debate over how AI could revolutionise strategic calculations, military structures, and warfare is now commonplace. In Europe, however, less attention is being paid to the weaponisation of AI and its military applications. Nonetheless, the European Union’s (EU) European Defence Fund (EDF) has earmarked between 4% and 8% of its 2021-2027 budget for disruptive defence technologies and high-risk innovation, in the expectation that such investment will boost Europe’s long-term technological leadership and defence autonomy.

In 2018, the Michael Dukakis Institute for Leadership and Innovation (MDI) established the Artificial Intelligence World Society (AIWS) to collaborate with governments, think tanks, universities, non-profits, firms, and other entities that share its commitment to the constructive development of AI, helping everyone achieve well-being and happiness in line with ethical norms. This effort is intended to guide AI development so that it serves and strengthens democracy, human rights, and the rule of law for a better world society.

by Editor | Sep 15, 2019 | News

The Boston Global Forum and the Michael Dukakis Institute for Leadership and Innovation will organize the AI World Society Conference at 1:30 pm on September 23, 2019, at the Harvard University Faculty Club, to introduce and discuss the Social Contract 2020 and rules and laws for AI and the Internet.

The keynote speaker is Professor Alex Sandy Pentland, who directs the MIT Connection Science and Human Dynamics labs and previously helped create and direct the MIT Media Lab and the Media Lab Asia in India. He is one of the most-cited scientists in the world, and Forbes recently named him one of the “7 most powerful data scientists in the world”, alongside the Google founders and the Chief Technology Officer of the United States. He also co-led the World Economic Forum discussion in Davos that led to the EU privacy regulation GDPR, and was central in forging the transparency and accountability mechanisms in the UN’s Sustainable Development Goals. He has received numerous awards and prizes, such as the McKinsey Award from Harvard Business Review, DARPA’s 40th Anniversary of the Internet award, and the Brandeis Award for work in privacy.

Professor Pentland is a co-founder of the Social Contract 2020 and a member of the Board of Thinkers of the Boston Global Forum.

Following Professor Pentland’s speech, Professor Christo Wilson of Northeastern University, a Harvard Law School Fellow and Michael Dukakis Leadership Fellow, will present solutions for AI transparency.

Then, Paul F. Nemitz and Michel Servoz will present the Rules and International Laws of the AI World Society.

Paul F. Nemitz is the Principal Advisor in the Directorate-General for Justice and Consumers. He was appointed by the European Commission on 12 April 2017, following a six-year appointment as Director for Fundamental Rights and Citizen’s Rights in the same Directorate-General. As Director, Nemitz led the reform of data protection legislation in the EU, the negotiations on the EU–US Privacy Shield, and the negotiations with major US Internet companies on the EU Code of Conduct against incitement to violence and hate speech on the Internet. He is also a member of the Social Contract 2020 team.

Mr. Michel Servoz is Special Adviser to the President of the European Commission and Senior Adviser for “Robotics, Artificial Intelligence and the Future of European Labour Law” at the European Political Strategy Centre (EPSC).

Scholars from Harvard, MIT, and Tufts will attend the AI World Society Conference.

Download the agenda for this conference here.

by Editor | Aug 25, 2019 | News

After news emerged that a multidisciplinary team led by the University of Surrey had successfully filed the first-ever patent applications for inventions created autonomously by AI without a human inventor, Ian Bolland caught up with team leader Professor Ryan Abbott to discuss the potential knock-on effects for the life sciences.

Professor Abbott began by explaining the effects the filings could have on the life sciences and other industries.

“These filings are important to any area of research and development as well as any area that relies on patents. Patents are more important in the life sciences than in many other areas, particularly for drug discovery. AI has also been used extensively in the drug discovery process for a long time for tasks like screening of compounds and in silico analysis. These tasks can be the foundation for patent filings.

“As AI is becoming increasingly sophisticated, it is likely to play an increasing role in R&D including in the life sciences. It is an exciting prospect that AI may be able to improve the efficiency of some historically very inefficient practices. Pharma and tech companies are likely to develop AI to automate more and more of the drug discovery process.”

Abbott believes that AI will become more autonomous, given current trends and its continuing, growing involvement in R&D. According to the Michael Dukakis Institute for Leadership and Innovation (MDI), AI can be an important tool for relieving people of resource constraints and arbitrary or inflexible rules in R&D, but AI algorithms should follow ethical principles that promote fairness and avoid unjust effects on people.

The original article can be found here.

by Editor | Aug 25, 2019 | News

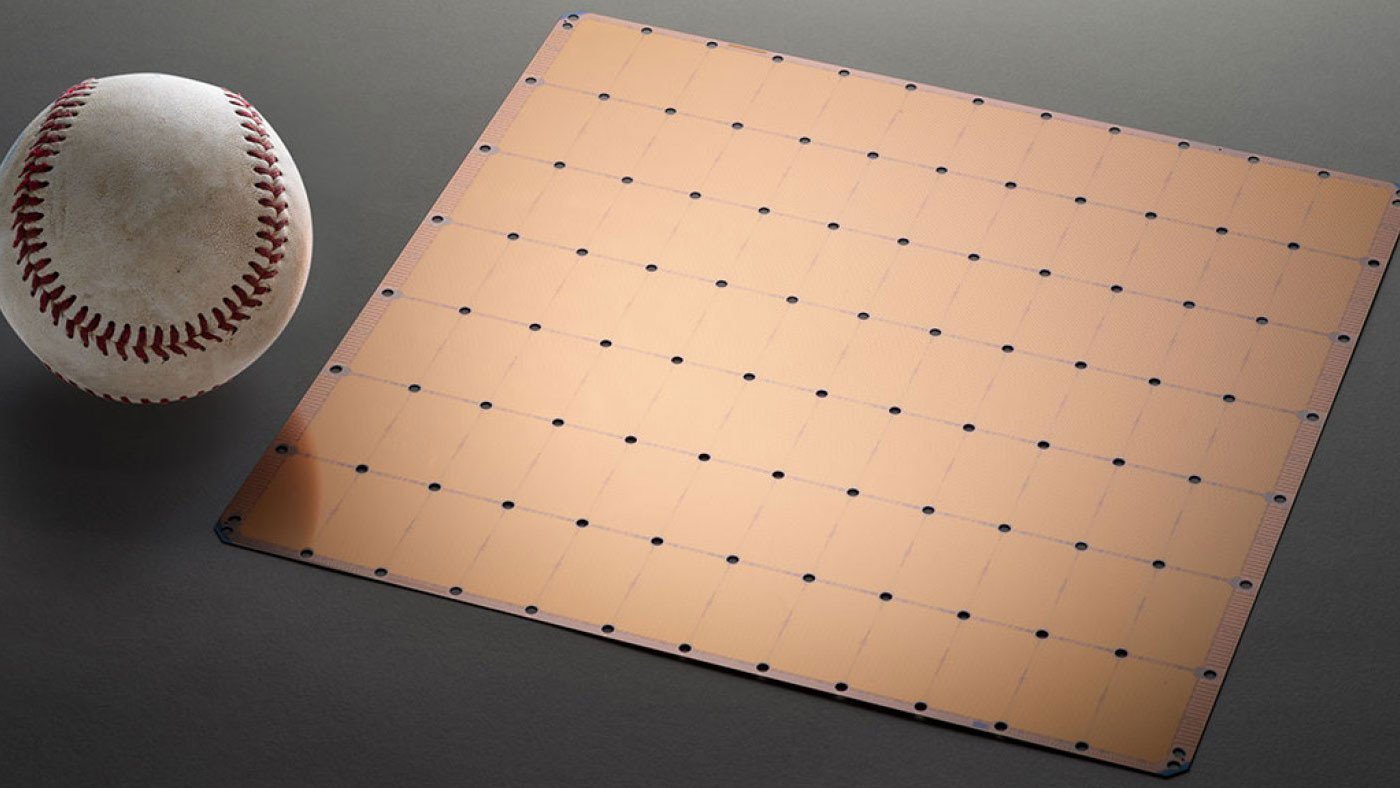

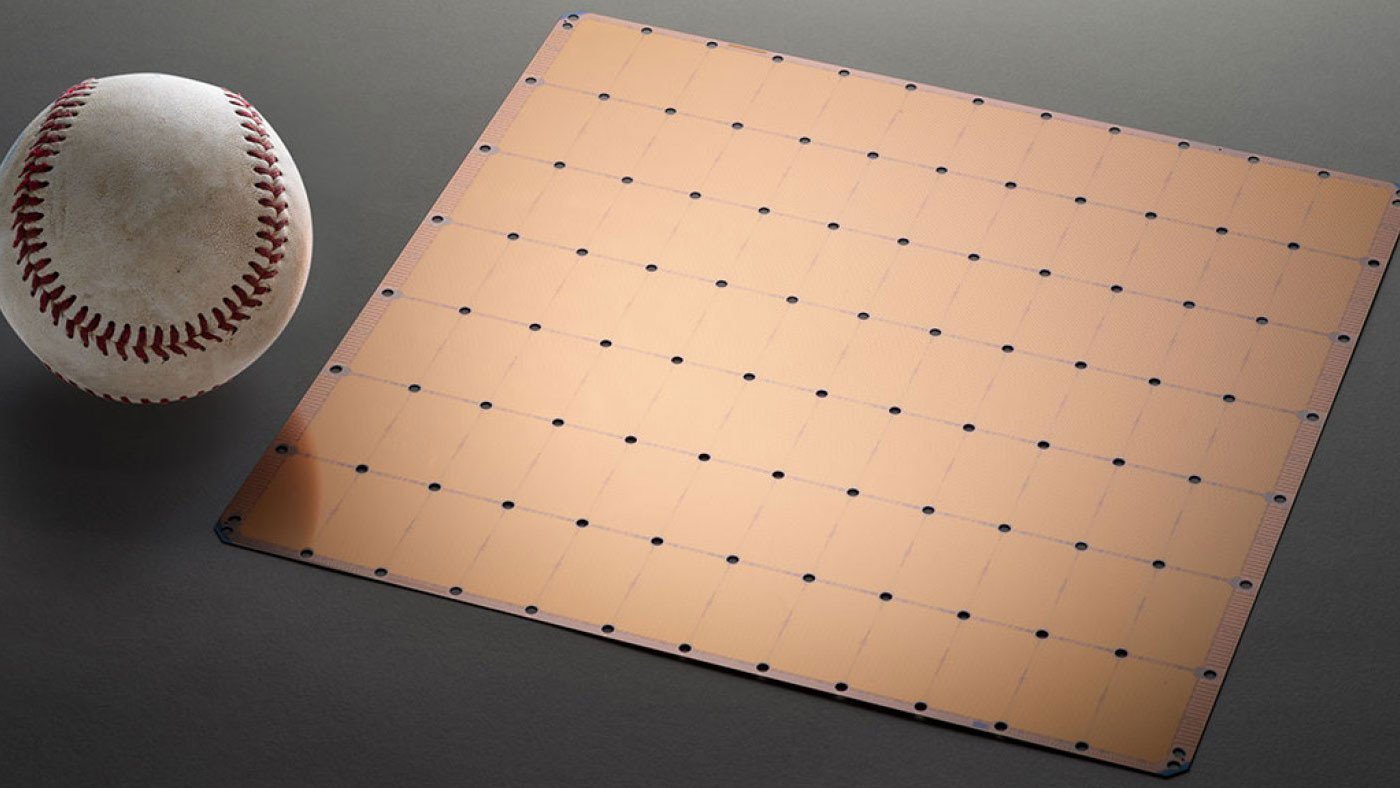

At the Hot Chips conference in Silicon Valley this week, Cerebras is unveiling the Cerebras Wafer Scale Engine. The chip is almost 57 times bigger than Nvidia’s largest graphics processing unit, or GPU, and boasts 3,000 times as much on-chip memory. GPUs are silicon workhorses that power many of today’s AI applications, crunching the data needed to train AI models.

According to Cerebras, hooking lots of small chips together creates latencies that slow down the training of AI models, a huge industry bottleneck. The company’s chip boasts 400,000 cores, or parts that handle processing, tightly linked to one another to speed up data-crunching. It can also shift data between processing and memory incredibly fast.

If this monster chip is going to conquer the AI world, it will have to show it can overcome some big hurdles. One of these is in manufacturing. If impurities sneak into a wafer being used to make lots of tiny chips, some of these may not be affected; but if there’s only one mega-chip on a wafer, the entire thing may have to be tossed. Cerebras claims it’s found innovative ways to ensure that impurities won’t jeopardize an entire chip, but we don’t yet know if these will work in mass production.
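To make the manufacturing argument concrete, here is a minimal sketch assuming the textbook Poisson yield model, in which defects land randomly at a uniform density and a die is usable only if it contains zero defects. The defect density and wafer area below are hypothetical illustration values, not Cerebras or industry figures, and the model deliberately ignores the defect-tolerance techniques Cerebras says it uses.

```python
import math


def die_yield(defect_density: float, die_area: float) -> float:
    """Poisson yield model: probability that a die of the given area has zero defects.

    Assumed units: defect_density in defects per cm^2, die_area in cm^2.
    """
    return math.exp(-defect_density * die_area)


def usable_silicon(defect_density: float, wafer_area: float, dies: int) -> float:
    """Expected fraction of wafer area that ends up in defect-free dies,
    assuming the area is split evenly into `dies` equal dies."""
    die_area = wafer_area / dies
    # All dies have the same area, so the expected usable fraction equals the per-die yield.
    return die_yield(defect_density, die_area)


if __name__ == "__main__":
    density = 0.1  # hypothetical: 0.1 defects per cm^2
    area = 400.0   # hypothetical usable wafer area in cm^2

    many_small = usable_silicon(density, area, dies=400)  # 400 dies of 1 cm^2 each
    one_big = usable_silicon(density, area, dies=1)       # a single wafer-sized die

    print(f"400 small dies: {many_small:.3f} of silicon usable")
    print(f"1 wafer-scale die: {one_big:.2e} chance of zero defects")
```

With these made-up numbers, roughly 90% of the small dies come out clean, while the chance that a single wafer-sized die is completely flawless is vanishingly small. That is why a wafer-scale design has to tolerate defects rather than hope to avoid them.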

Another challenge is energy efficiency. AI chips are notoriously power hungry, which has both economic and environmental implications. Shifting data between lots of tiny AI chips is a huge power suck, so Cerebras should have an advantage here. If it can help crack this energy challenge, then the startup’s chip could prove that for AI, big silicon really is beautiful.

The original article can be found here.