by Editor | Apr 26, 2020 | News

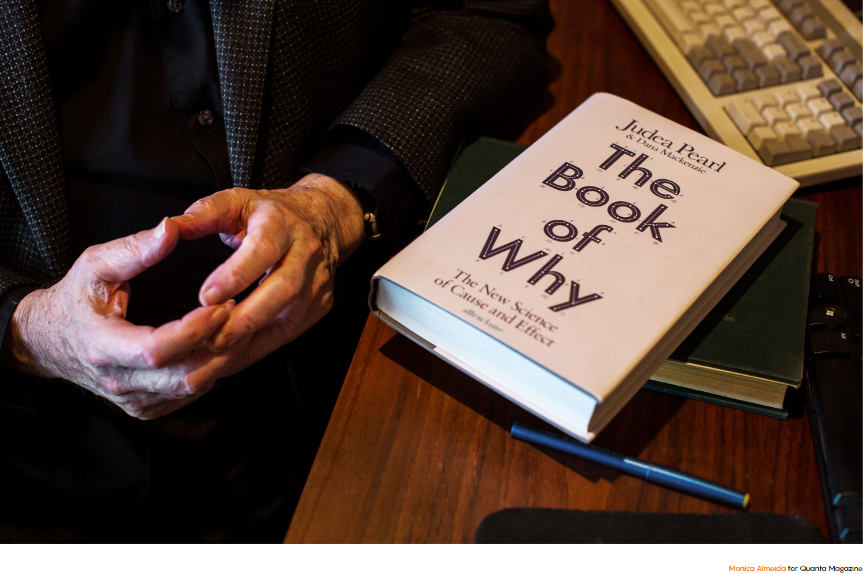

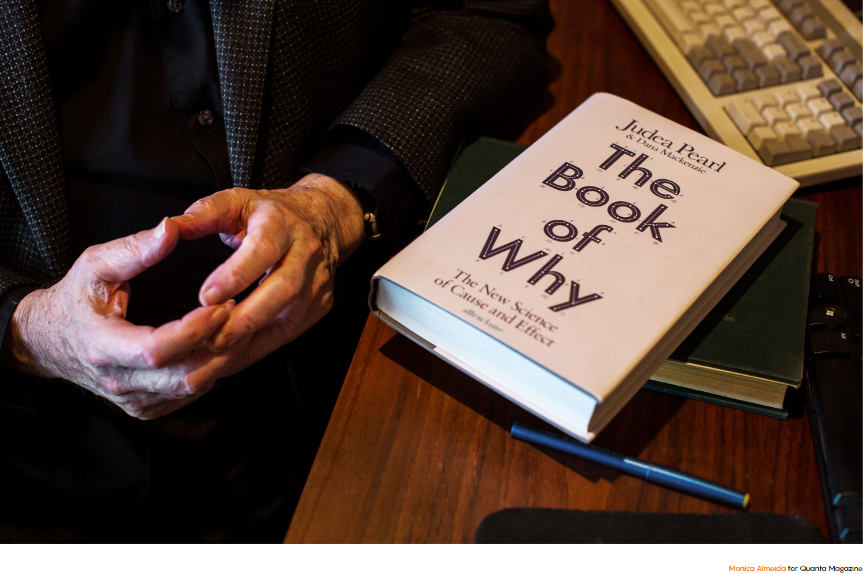

Artificial intelligence owes a lot of its smarts to Judea Pearl. In the 1980s he led efforts that allowed machines to reason probabilistically. Now he’s one of the field’s sharpest critics. In his latest book, “The Book of Why: The New Science of Cause and Effect,” he argues that artificial intelligence has been handicapped by an incomplete understanding of what intelligence really is.

Three decades ago, a prime challenge in artificial intelligence research was to program machines to associate a potential cause with a set of observable conditions. Pearl figured out how to do that using a scheme called Bayesian networks. Bayesian networks made it practical for machines to say that, given a patient who returned from Africa with a fever and body aches, the most likely explanation was malaria. In 2011 Pearl won the Turing Award, computer science’s highest honor, in large part for this work.
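To make the diagnostic reasoning above concrete, here is a minimal sketch of the simplest possible case: a single cause node pointing to a single evidence node, updated with Bayes' rule. It is not Pearl's full Bayesian network machinery, and every number is a hypothetical value chosen only for illustration, not clinical data. The function name `posterior` and the three candidate causes are likewise assumptions.

```python
def posterior(prior, likelihood):
    """Return P(cause | evidence) for each cause using Bayes' rule."""
    # Joint probability of each cause together with the observed evidence.
    joint = {cause: prior[cause] * likelihood[cause] for cause in prior}
    # Normalize so the posterior probabilities sum to 1.
    total = sum(joint.values())
    return {cause: p / total for cause, p in joint.items()}

# Hypothetical prior beliefs for a traveler returning from a malaria region.
prior = {"malaria": 0.30, "flu": 0.20, "other": 0.50}

# Hypothetical P(fever and body aches | cause).
likelihood = {"malaria": 0.90, "flu": 0.60, "other": 0.05}

post = posterior(prior, likelihood)
most_likely = max(post, key=post.get)

for cause, p in post.items():
    print(f"P({cause} | symptoms) = {p:.3f}")
print("Most likely explanation:", most_likely)
```

With these made-up inputs the symptoms shift most of the probability toward malaria. A real Bayesian network chains many such conditional tables across a graph of variables.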

But as Pearl sees it, the field of AI got mired in probabilistic associations. These days, headlines tout the latest breakthroughs in machine learning and neural networks. We read about computers that can master ancient games and drive cars. Pearl is underwhelmed. As he sees it, the state of the art in artificial intelligence today is merely a souped-up version of what machines could already do a generation ago: find hidden regularities in a large set of data. “All the impressive achievements of deep learning amount to just curve fitting,” he said recently.

In his new book, Pearl, now 81, elaborates a vision for how truly intelligent machines would think. The key, he argues, is to replace reasoning by association with causal reasoning. Instead of the mere ability to correlate fever and malaria, machines need the capacity to reason that malaria causes fever. Once this kind of causal framework is in place, it becomes possible for machines to ask counterfactual questions — to inquire how the causal relationships would change given some kind of intervention — which Pearl views as the cornerstone of scientific thought. Pearl also proposes a formal language in which to make this kind of thinking possible — a 21st-century version of the Bayesian framework that allowed machines to think probabilistically.
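The gap between association and causation can be shown with a toy model. The sketch below assumes a two-variable causal model in which malaria causes fever, with hypothetical probabilities. It compares what we learn about malaria when we observe a fever with what we learn when a fever is forced from outside (an intervention, written do(fever) in Pearl's notation). The function names and numbers are illustrative assumptions.

```python
# Hypothetical causal model: malaria -> fever.
P_MALARIA = 0.10                               # P(malaria)
P_FEVER_GIVEN = {True: 0.90, False: 0.10}      # P(fever | malaria?)

def p_malaria_given_fever_observed():
    """Association: P(malaria | fever), computed with Bayes' rule."""
    with_malaria = P_MALARIA * P_FEVER_GIVEN[True]
    without_malaria = (1 - P_MALARIA) * P_FEVER_GIVEN[False]
    return with_malaria / (with_malaria + without_malaria)

def p_malaria_given_fever_forced():
    """Intervention: P(malaria | do(fever)).

    Forcing a fever replaces the malaria -> fever mechanism with a
    constant, so the fever no longer carries information about its
    usual cause and the probability of malaria stays at its prior.
    """
    return P_MALARIA

observed = p_malaria_given_fever_observed()
forced = p_malaria_given_fever_forced()
print(f"P(malaria | fever observed) = {observed:.2f}")
print(f"P(malaria | do(fever))      = {forced:.2f}")
```

A purely associational learner sees only the first quantity. The causal model is what licenses the second answer: inducing a fever does not give anyone malaria.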

Pearl expects that causal reasoning could provide machines with human-level intelligence. They’d be able to communicate with humans more effectively and even, he explains, achieve status as moral entities with a capacity for free will — and for evil.

The original article can be found here.

In support of AI ethics, the Michael Dukakis Institute for Leadership and Innovation (MDI) established the Artificial Intelligence World Society (AIWS.net) to promote ethical norms and practices in the development and use of AI. In addition, the Michael Dukakis Institute and the Boston Global Forum honored Professor Judea Pearl as the 2020 World Leader in AI World Society for his contributions to AI causality. In the future, Professor Pearl will also contribute to causal inference for AI transparency, which is one of the important AIWS topics in AI ethics.

by Editor | Apr 19, 2020 | Event Updates

At 9:00 am (Boston time) on April 22, 2020, Professor Alex Pentland will speak at the online AIWS Roundtable: Solutions to Reopen in Democratic Countries.

Professor Alex Pentland is the director of MIT Connection Science and a co-founder of AIWS.net.

Moderator: Dr. Lyndon Haviland, Adviser to the United Nations Secretary-General

Participants: senior business leaders of global corporations who are alumni of the Harvard Business School AMP program.

Professor Alex Pentland is one of the seven most powerful data scientists, according to a Forbes Magazine ranking. He will present how personal data can be used to prevent the spread of the coronavirus without violating privacy rights, so that the economy and society can reopen.

by Editor | Apr 19, 2020 | News

The world’s growing pandemic of the Wuhan virus underscores the paramount goal of our AI initiative. Our vision is to establish a universal AI value system ensuring that advances in AI technology make lives better and do not give ruling authorities more tools to oppress people or exacerbate human suffering.

The first of our AI Universal World Guidelines states:

“Right to Transparency: All individuals have the right to know the basis of an AI decision that concerns them. This includes access to the factors, the logic, and techniques that produces the outcome.”

While our world is still preoccupied with fighting the Wuhan virus, it has become clear to all that, had the ruling power of China been more forthcoming with its people and with the world, this pandemic could have been avoided or its deadly impact could have been much more limited. As early as November 2019 in Wuhan, China, there had been many red flags about this new deadly disease; doctors had rung the alarm bell. Unfortunately for all of us, the Chinese government not only kept these warnings secret, but also punished the doctors and suppressed any truthful voice of its people. In Asian culture, there is a saying that “treating an illness is similar to fighting a fire.” Time is of the essence. Any delay will cause irreparable harm.

During the most critical period of November and December 2019, China repeatedly announced that there was nothing to worry about and that it had everything under control while Chinese people were dying in the thousands. Furthermore, the World Health Organization simply repeated the official version of the Chinese government. This lack of transparency from a national government and from an international organization has no doubt contributed greatly to the avoidable suffering of millions of people across the globe.

Every government has a different set of values. The more values we have in common, the better. We hope that in the near future, at least in the AI World Society, we will all share basic universal principles, among which transparency is valued, respected, and commonly practiced.

by Editor | Apr 19, 2020 | News

Professor John Quelch is a co-founder of the Boston Global Forum, Vice Provost of the University of Miami, Dean of the Miami Herbert Business School, and Leonard M. Miller University Professor.

Recently, Professor Quelch contributed a video advising people on how to prevent the coronavirus with the 7Cs.

by Editor | Apr 19, 2020 | News

Most C-suite executives know they need to integrate AI capabilities to stay competitive, but too many of them fail to move beyond the proof-of-concept stage. They get stuck focusing on the wrong details or building a model to prove a point rather than solve a problem. That’s concerning because, according to our research, three out of four executives believe that if they don’t scale AI in the next five years, they risk going out of business entirely. To fix this, we offer a radical solution: Kill the proof of concept. Go right to scale.

We came to this solution after surveying 1,500 C-suite executives across 16 industries in 12 countries. We discovered that while 84% know they need to scale AI across their businesses to achieve their strategic growth objectives, only 16% of them have actually moved beyond experimenting with AI. The companies in our research that were successfully implementing full-scale AI had all done one thing: they had abandoned proofs of concept.

To scale value in the AI era, the key is to think big and start small: prioritize advanced analytics, governance, ethics, and talent from the start. It also demands planning. Decide what value looks like for you, now and three years from now. Don’t sacrifice your future relevance by being so focused on delivering for today that you aren’t prepared for the next wave. Understand how AI is changing your industry and the world, and have a plan to capitalize on it.

This is certainly new territory, but there is still time to get ahead if you lay the groundwork now. What you don’t have time to do is waste it proving a concept that already exists as consensus.

The original article can be found here.

According to the Artificial Intelligence World Society Innovation Network (AIWS.net), AI can be an important tool to serve and strengthen democracy, human rights, and the rule of law. In this effort, the Michael Dukakis Institute for Leadership and Innovation (MDI) invites participation and collaboration with think tanks, universities, non-profits, firms, and other entities that share its commitment to the constructive development of full-scale AI for world society.