by BGF | Feb 10, 2019 | News

Japanese Prime Minister Shinzo Abe and German Chancellor Angela Merkel exchanged views on promoting bilateral security and economic cooperation at their recent meeting.

At a press conference held after her talks with Abe, Merkel made clear her support for realizing a “free and open Indo-Pacific”. According to her, the initiative also bears on China’s territorial ambitions. Japan, for its part, is strengthening its relationship with Germany to build a shared awareness of China.

In past years, Germany was thought to place great value on its relations with China. This time, however, the German Chancellor visited only Japan, a sign that Germany is keeping a certain distance from China.

In addition, Abe and Merkel confirmed that the two countries would strengthen joint research on artificial intelligence (AI) and self-driving cars. Both Japan and Germany are concerned about China’s growing ability to dominate the IT market.

Abe and Merkel attended a meeting of business leaders from both countries after the summit talks on Monday. Referring to the era of 5G, the next-generation mobile network technology, Merkel raised the question of how to prevent the Chinese government from collecting and exploiting huge amounts of data.

In 2015, the Boston Global Forum named German Chancellor Angela Merkel the recipient of its World Leader for Peace, Security and Development Award. In the same year, it named Japanese Prime Minister Shinzo Abe a recipient of its World Leader in Cybersecurity Award, which is granted to an individual whose outstanding contributions have advanced cybersecurity.

by BGF | Feb 4, 2019 | News

Unlike most great technological advances, the arrival of artificial intelligence (AI) comes with extraordinary warnings.

Stephen Hawking, one of the world’s leading physicists, took a dim view, warning that AI could overtake humans and become a new form of life. In an interview with Wired magazine, he said he feared that AI could replace humans altogether: if people design computer viruses, someone will design AI that improves and replicates itself.

Alan Turing, the father of modern computing, proposed that if people cannot tell a machine’s responses apart from a human’s, the machine can be said to think. AI as a field began in the mid-1950s at Dartmouth College, and early programs astonished the world by solving simple algebra problems and proving logical theorems.

Decades later, so-called expert systems appeared, and their ability to tackle complex problems multiplied. These advances were driven largely by Moore’s Law.

In 1997, IBM’s chess computer Deep Blue defeated the reigning world champion, Garry Kasparov. Another milestone is the deep neural network, a subcategory of artificial neural networks, which learns the right mathematical operations to transform an input into an output.

Although these advances are valuable in everything from medicine to automation, an unavoidable danger of AI has recently become clear: ethical concerns that must be kept in check.

The concept of artificial morality was introduced by Wendell Wallach in “Moral Machines”, a book co-written with Colin Allen, in which he asked whether software designers should be restrained from creating programs that can cause harm, whether or not those programs are designed to be moral.

Is there a clear boundary between human awareness and feeling (for example, empathy) and their exact reproduction in a machine? Joseph Weizenbaum, one of AI’s pioneers and an MIT professor, was convinced that AI would never be able to replicate human qualities such as compassion or judgment.

Taking a different view, roboticist Hans Moravec and his colleagues predicted that merging humans and machines into cyborgs would create a more intelligent “species”, one that could increasingly be capable of killing people.

Another area of concern is the effect of AI on jobs. However, some have found that automation often produces a net increase in employment because of unpredictable downstream gains in microeconomic and macroeconomic productivity.

Clearly, this is unexplored ethical and technical territory, in which caution and attention to the potential for unintended consequences are vital. We can hope, and pray, that AI will play an active role in our lives and in the lives of generations to come.

For better or worse, the future of AI lies mainly in the hands of its developers: it is up to us, as humans, to decide. A set of ethical guidelines and standards is therefore needed for developers to follow, covering not just what to build but also how to build it ethically. This is exactly what the Michael Dukakis Institute for Leadership and Innovation (MDI) is attempting to do. So far, the organization has been developing the AIWS Index for governments and enterprises.

by BGF | Feb 4, 2019 | News

Both China and Russia are capable of launching cyberattacks that could take down power grids or hospitals, according to the latest annual US Worldwide Threat Assessment.

The 42-page report, presented by the Director of National Intelligence to the Senate Select Committee on Intelligence, cites cyberattacks, online disinformation, and election interference as the top security concerns facing the US today. It identifies China and Russia as the greatest sources of potential attacks on US infrastructure (such as natural gas pipelines), with the capacity to cause disruptions lasting days or even weeks. The assessment said Russia could carry out cyber espionage and launch influence campaigns like those conducted during the 2016 US presidential election, and that it is “becoming more adept at using social media to alter how we think, behave, and decide.”

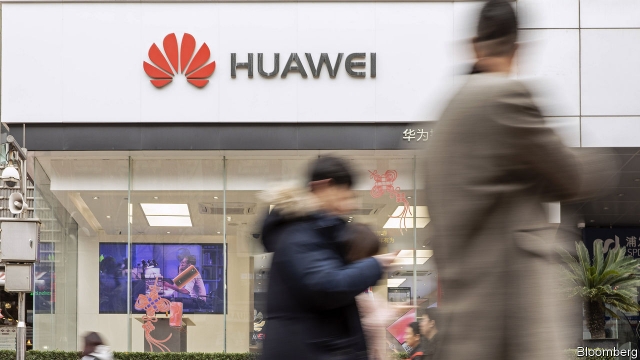

Unsurprisingly, the report identifies China as America’s most active rival when it comes to cyber espionage. It holds that Chinese IT firms are being used to spy on the US, an official view that explains the recent treatment of Huawei by the US and some of its allies.

The report says the technology-related threats it identifies will only increase as people integrate billions of new digital devices into their lives and workplaces. It warns that the US’s overall lead in science and technology will continue to shrink. It also cites several emerging technologies that could enable new threats: AI, biotechnology, and 5G networks.

AI should be used for purposes that serve humanity. Toward this aim, MDI has built the AIWS Initiative to establish a society in which AI is applied in the best and most effective ways, bringing the greatest benefit to humans.

by BGF | Feb 4, 2019 | News

AI systems have lately become known to be unfair at times, because they reproduce the prejudices widespread in society. An algorithm developed by engineers at MIT CSAIL may help eliminate such bias from AI.

The algorithm can identify and minimize hidden biases by learning both a specific task, such as face recognition, and the underlying structure of the training data. In testing, the algorithm reduced ‘categorical bias’ by over 60%, while overall performance remained stable.

Unlike other approaches that require human input to target specific biases, the MIT team’s algorithm is able to examine a dataset, identify any biases, and automatically resample it without needing a programmer in the loop.

“Facial classification in particular is a technology that’s often seen as ‘solved,’ even as it’s become clear that the datasets being used often aren’t properly vetted,” said Alexander Amini, a co-author of the study.

“Rectifying these issues is especially important as we start to see these kinds of algorithms being used in security, law enforcement and other domains.”

According to Amini, the debiasing algorithm would be particularly relevant for very large datasets that would be too extensive for humans to vet.

Giving AI the capability to reason and adapt will be a breakthrough for the industry, but it also means AI will be given significant control over itself and its actions, which, without thorough consideration, might lead to unforeseen consequences. This problem calls for monitoring and regulation of AI. Currently, professors and researchers at MDI are building an ethical framework for AI to ensure that it is deployed safely.

by BGF | Feb 4, 2019 | News

The U.S. Justice Department unsealed two indictments against China’s Huawei Technologies Co Ltd.

One indictment accuses Huawei and a U.S. Huawei subsidiary of stealing trade secrets from T-Mobile, an American wireless carrier.

In a 2017 civil lawsuit, a Huawei employee was found to have taken one of the arms of “Tappy”, a robotic phone-testing device owned by T-Mobile.

A Seattle jury ordered Huawei to pay T-Mobile $4.8m in compensation. The court found, however, “neither damage, unjust enrichment nor willful and malicious conduct by Huawei”.

Huawei was also accused of fraudulent financial practices: misleading four major banks, including HSBC, about the operations of a subsidiary, leading them to process transactions that violated U.S. sanctions on Iran.

Meng Wanzhou, the chief financial officer of Chinese tech giant Huawei, was arrested by Canadian police on December 1 at the request of American authorities. The U.S. has formally requested her extradition, and the Canadian Department of Justice has 30 days to decide whether to begin the extradition process.

In the face of the rapid development of technology in general and artificial intelligence (AI) in particular, the risk that AI will develop beyond current frameworks is very real. The Michael Dukakis Institute (MDI) has been developing the Artificial Intelligence World Society (AIWS) with the 7-layer AIWS Model; at the same time, MDI and AIWS established the AIWS Standards and Practice Committee with the goal of developing standards for AI citizens.