Agenda of United Nations Academic Impact Charter Day Lecture

OK. Hello. My name’s Alex Pentland. I’m a professor at MIT, and I run a group called Connection Science, which develops techniques and open-source software to help countries and companies deal with AI in a way that is effective and efficient, but also ethical. The key idea is this: in the new world we’re entering, as you have heard everywhere, most of the data is in private hands, in the hands of companies. Yet you need that data to run government and to be efficient about social and civic systems. How are you going to do that in a way that’s trustworthy, unbiased, and fair, and that people understand? So I’ve developed a method that I call open algorithms. It allows you to take data from private entities like companies and combine it safely to produce data about entire countries or other large structures, such as large international companies, in a way that’s safe, auditable, and understandable. The drivers of this are things like security: if you put all the data in one spot, it’s sure to get stolen, which is an enormous risk. There is inertia: different people own the data. And there are privacy concerns: individuals own the data in a certain sense, so they should be able to know what’s happening and have control over data about them. So how do you put this together? The big idea is that instead of putting data in one place, you keep data where it’s originally collected. This is federated data rather than concentrated data. The people who have ownership rights over the data continue to hold on to it, but they agree to answer questions algorithmically. The computer the data sits on is willing to answer questions for the public good, or for the joint group. Those questions are called algorithms, and in our system we call them open algorithms because they are legally agreed to beforehand.
So instead of going to someone and saying, “Can you give me your data?”, you say, “Here’s an algorithm; I’ll show it to you. Can you run this on your data and give me the answer?” Legally and organizationally, that’s a much easier thing to do. In fact, small countries like Estonia have been doing it for a long time, and big companies like AT&T do it as a matter of self-protection, to make sure they’re not breaking the law. While it sounds complicated to the human ear, from a computer’s point of view, reading the data where it’s collected and answering questions there is in many cases more efficient than moving the data to one place and answering the questions centrally. The difference is that it’s a lot safer to leave the data where it’s collected. Military people figured this out a long time ago when they started building castles with moats, which in today’s computer world are firewalls: it’s defense in depth. That’s what we do with data. When you do that, all of a sudden you can keep track of what questions are being asked and about what data, and the people who collected the data have the ability to monitor whether the questions are ones they agreed to. Because you record what’s happening, the system is auditable. So if you have a question about bias or fairness, you can go and answer it, because now you have a record of what was done with the data and who did it. For instance, in Colombia we were able to look at poverty programs and discover that there were almost a million people who were getting benefits who shouldn’t, and a million people who weren’t getting benefits who should. That comes from the ability to audit all the decisions, which to human ears sounds complicated, but from a computer’s point of view it’s just a dashboard that keeps track of what has happened. So that’s the big view: instead of having one centralized control and one centralized repository, you have a federation of different players and their interests, who agree to answer certain questions for certain functions, and you audit them.

As I said, there are some countries, like Estonia, that are doing this. Recently Europe agreed: Eurostat, the official statistics organization for the EU countries, adopted this sort of framework. There are several other countries we’re working with, such as Israel and Australia, that are also putting pilots in place to explore how they can get better insights about their countries and have better policies by using public and private data together in an open, auditable way. That’s the key thing. We’re deploying these systems in different parts of the world, and we’d be happy to help you if you’re interested. People are typically interested in things like making social programs more efficient, getting greater income from tourism or from innovation, or new sorts of civic systems such as better transportation and public health, and we help build those things for people. We don’t do turnkey solutions. What we do is build prototypes that are then specialized to be operational for your particular situation. If you want to know more about this, I refer you to, for instance, the keynote for the EU presidency, or other talks like it that we will be making available to you. We also have a book called Trusted Data, which describes the techniques. It includes the piece that the Obama White House asked us to do for them, a piece for the UN Secretary-General, and a piece for the World Economic Forum, describing the policy, legal, and technical aspects. Interestingly, the Chinese central government just translated it into Chinese and published it through a Chinese central economic press. So you might be interested in that. That’s the top-level story here.
I hope you’re interested. We’d be happy to talk to you and work with you. Thank you.
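The open algorithms idea described in the talk can be illustrated with a minimal Python sketch. It is not the software from the speaker’s group and does not reproduce any real API; every name in it (`DataHolder`, `APPROVED_ALGORITHMS`, `income_count_sum`, `MIN_GROUP_SIZE`, `federated_mean_income`) is hypothetical. It assumes each data holder keeps its raw records locally, runs only algorithms from a registry agreed in advance, returns only aggregates (a count and a sum), refuses to report on very small groups, and logs every request, approved or refused, so the questions asked can be audited later.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, Dict, List, Tuple


# Hypothetical algorithm that all parties agreed to in advance.
# It returns only an aggregate (record count, income total), never raw records.
def income_count_sum(records: List[dict]) -> Tuple[int, float]:
    incomes = [r["income"] for r in records]
    return len(incomes), float(sum(incomes))


# Hypothetical registry of pre-approved ("open") algorithms, keyed by ID.
APPROVED_ALGORITHMS: Dict[str, Callable[[List[dict]], Tuple[int, float]]] = {
    "income_count_sum": income_count_sum,
}

# Hypothetical safeguard: refuse to report on groups smaller than this.
MIN_GROUP_SIZE = 3


@dataclass
class DataHolder:
    """A party that keeps its data where it was collected."""
    name: str
    records: List[dict]  # raw records never leave this object
    audit_log: List[dict] = field(default_factory=list)

    def answer(self, requester: str, algorithm_id: str) -> Tuple[int, float]:
        approved = algorithm_id in APPROVED_ALGORITHMS
        # Every request is logged before any answer, including refused ones.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "requester": requester,
            "algorithm": algorithm_id,
            "approved": approved,
        })
        if not approved:
            raise PermissionError(f"{algorithm_id!r} is not an approved algorithm")
        count, total = APPROVED_ALGORITHMS[algorithm_id](self.records)
        if count < MIN_GROUP_SIZE:
            raise ValueError(f"{self.name}: group too small to answer safely")
        return count, total


def federated_mean_income(holders: List[DataHolder], requester: str) -> float:
    """Combine per-holder aggregates into one overall mean."""
    answers = [h.answer(requester, "income_count_sum") for h in holders]
    total_count = sum(count for count, _ in answers)
    total_income = sum(total for _, total in answers)
    return total_income / total_count


if __name__ == "__main__":
    bank = DataHolder("bank", [{"income": x} for x in (100.0, 200.0, 300.0)])
    telco = DataHolder("telco", [{"income": x} for x in (400.0, 500.0, 600.0, 700.0)])
    print(federated_mean_income([bank, telco], requester="statistics_office"))  # 400.0
    try:
        bank.answer("statistics_office", "export_raw_records")
    except PermissionError as err:
        print(err)  # 'export_raw_records' is not an approved algorithm
    print(len(bank.audit_log))  # 2
```

Returning a count and a sum rather than a per-holder mean lets the requester combine answers from several holders into an exact overall figure without seeing any individual record, and the per-holder audit log is the kind of record the talk describes for checking afterwards which questions were asked and by whom.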

After the great success of the AIWS–G7 Summit Conference and the AIWS-G7 Summit Initiative, the Boston Global Forum organized the AI World Society (AIWS) Summit to engage governmental leaders, thought leaders, policymakers, scholars, civic societies, and non-government organizations in building a peaceful, safe, and new democracy for the world with deeply applied AI. Because prominent figures are often very busy, many cannot meet at the same time and place, so BGF has adopted a new format for the AIWS Summit: combining online and offline.

Alliance of civic societies, non-government organizations, and thought leaders for a safe, peaceful, and Next Generation Democracy.

Mission:

A high level international discussion about AI governance for a safe, peaceful, and Next Generation Democracy.

Organized by the Boston Global Forum and the World Leadership Alliance-Club de Madrid, and sponsored by the government of the Commonwealth of Massachusetts.

Outcome: recommendations, suggestions for initiatives, solutions, and policies to build a more peaceful, safer, and more democratic society and world with AI; a new social and economic revolution with AI that will shape better and brighter futures with equal opportunity in contribution, transparency, and openness, in which capital and wealth cannot corrupt democracy, and citizens will be recognized, rewarded, and have a good life.

Format:

Combining online and offline.

Moderators: Governor Michael Dukakis, and Nguyen Anh Tuan

Speakers: leaders of governments, political leaders, business leaders, prominent professors, and thought leaders. Governor Michael Dukakis will send invitation letters to speakers introducing the mission, topics, and outcome of the AI World Society Summit 2019.

Speakers can send their talks as a video clip (maximum 30 minutes) or as text to the Content Team of the AI World Society Summit 2019. The Content Team will post them to the AI World Society Summit section of the Boston Global Forum’s website and deliver them to the other speakers and discussants; the talks will then be submitted to the G7 Summit 2019 as part of the AIWS-G7 Summit Initiative.

Time: from April 25, 2019, at the AI World Society – G7 Summit Conference, to August 5, 2019.

The first speaker is one of the Fathers of the Internet, Vint Cerf, Vice President and Chief Internet Evangelist of Google.

The second speaker is Professor Neil Gershenfeld, MIT.

Vint Cerf, one of the Fathers of the Internet, received the World Leader in AIWS Award at the BGF-G7 Summit Conference on April 25, 2019, at the Harvard University Faculty Club.

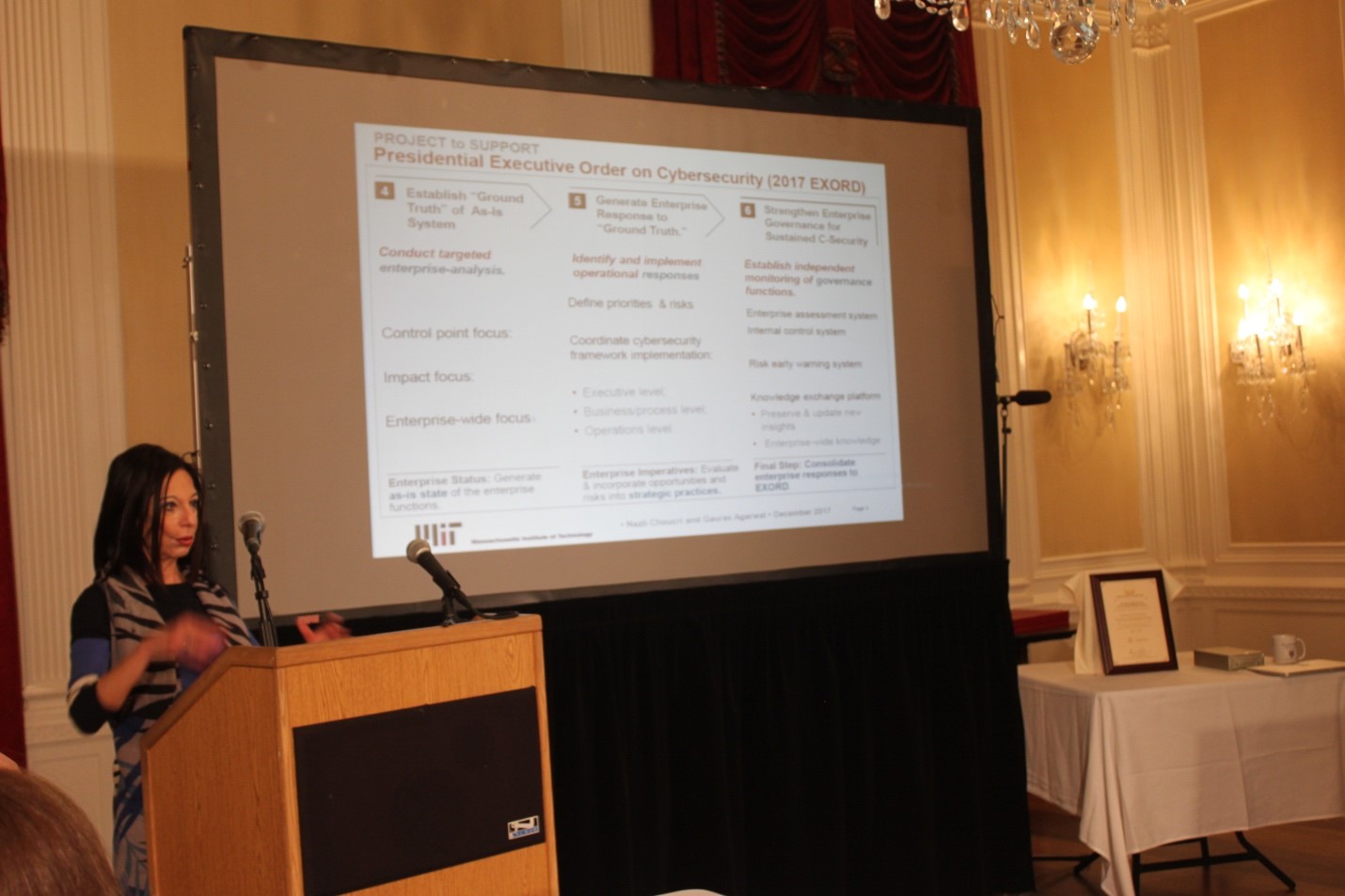

Professor Nazli Choucri, MIT, a Board Member of the Boston Global Forum and the Michael Dukakis Institute for Leadership and Innovation and a very active member of the AIWS Standards and Practice Committee, has launched a new book, “International Relations in the Cyber Age.”

“The international system of sovereign states has evolved slowly since the seventeenth century, but the transnational global cyber system has grown rapidly in just a few decades. Two respected scholars – a computer scientist and a political scientist – have joined their complementary talents in a bold and important exploration of this crucial co-evolution.”

– Joseph S. Nye, Harvard Kennedy School and author of The Future of Power

“Many have observed that the explosive growth of the Internet and digital technology have reshaped longstanding global structures of governance and cooperation. International Relations in the Cyber Age astutely recasts that unilateral narrative into one of co-evolution, exploring the mutually transformational relationship between international relations and cyberspace.”

– Jonathan Zittrain, George Bemis Professor of International Law and Professor of Computer Science, Harvard University

“Cyber architecture is now a proxy for political power. A leading political scientist and pioneering Internet designer masterfully explain how ‘high politics’ intertwine with Internet control points that lack any natural correspondence to the State. This book is a wake-up call about the collision and now indistinguishability between two worlds.”

– Laura DeNardis, Professor, American University, and author of The Global War for Internet Governance

“This book uniquely combines the perspectives of an Internet pioneer (Clark) and a leading political scientist with expertise in cybersecurity (Choucri) to produce a very rich account of how cyberspace impacts international relations, and vice versa. It is a valuable contribution to our understanding of Internet governance.”

– Jack Goldsmith, Henry Shattuck Professor, Harvard Law School

About this book

A foundational analysis of the co-evolution of the internet and international relations, examining resultant challenges for individuals, organizations, firms, and states.

In our increasingly digital world, data flows define the international landscape as much as the flow of materials and people. How is cyberspace shaping international relations, and how are international relations shaping cyberspace? In this book, Nazli Choucri and David D. Clark offer a foundational analysis of the co-evolution of cyberspace (with the internet as its core) and international relations, examining resultant challenges for individuals, organizations, and states.

The authors examine the pervasiveness of power and politics in the digital realm, finding that the internet is evolving much faster than the tools for regulating it. This creates a “co-evolution dilemma”: a new reality in which digital interactions have enabled weaker actors to influence or threaten stronger actors, including the traditional state powers. Choucri and Clark develop new methods of analysis. One, “control point analysis,” examines control in the internet age; they apply it to a variety of situations, including major actors in the international and digital realms: the United States, China, and Google. Another is a network analysis of international law for cyber operations. A third measures the propensity of states to expand their influence in the “real” world compared to expansion in the cyber domain. In so doing, they lay the groundwork for a new international relations theory that reflects the reality in which we live, one in which the international and digital realms are inextricably linked and evolving together.

Authors

Nazli Choucri

Nazli Choucri is Professor of Political Science at MIT, Faculty Affiliate at the MIT Institute for Data, Systems, and Society, Director of the Global System for Sustainable Development (GSSD), and the author of Cyberpolitics in International Relations (MIT Press).

David D. Clark

David D. Clark is a Senior Research Scientist at the MIT Computer Science and Artificial Intelligence Lab and a leader in the design of the Internet since the 1970s.