by BGF | Nov 2, 2018 | News

By Llewellyn King, Executive Producer and Host, “White House Chronicle” on PBS

Dr. Elliot Salloway, Executive Social Media Director of the Boston Global Forum

Elliot Salloway was my friend but, in his way, he was a friend of everyone.

Elliot was committed — albeit with a gleam in his eye and a spring in his step — to making the world a better place any way he could.

He was preoccupied with genocide and its causes and devoted his last several years to working on “empathy” as a teachable subject. He believed if you could get the young to think empathetically, you could avoid a lot of misery in conflict zones. Even while cancer was chewing up his body, he worked to support the teaching of empathy in Africa.

He was a highly empathetic man himself. I met him at my first attendance at the Boston Global Forum, and he drove me from Harvard Square to South Station to get a train to Providence, R.I. Trains came and went. He parked his car and we talked, and talked and talked. It was as though we had gone to middle school together. Elliot was not just a friend, he was a total friend.

His life was as incredible as it was full. His father worked in a tanning factory and died early from the poisons which were part of that trade at that time. Elliot told me he vowed not to suffer the same fate; he went to dental school and became the first periodontist in Worcester, Mass. Earlier he did his military service in the Air Force as an officer-dentist.

But he did more than treat patients. He taught at Harvard for 30 years, and was an avid blue-water sailor, race car driver and tennis player.

He learned photography (he said street photography was his favorite) and painting and exhibited his work. All his art reflected his life’s passion: the contrast between good and evil. Every painting shows good balancing evil.

He and his wife Regina raised three sons and continued their life in Worcester — when he wasn’t in the driver’s seat of a race car, at the helm of a yacht, dabbing paint on a canvas, or looking through a camera’s viewfinder.

When the Boston Global Forum was getting underway, Tuan asked Elliot to be the chief operating officer. He threw himself into that with brio, as he did everything else.

Photo: Elliot Salloway (far left) at a Boston Global Forum meeting in 2013 at Harvard University

In the end, if kindness is the greatest thing that can be said of a man, and I think it is, then Elliot was great that way as well as a physician, a father and a teacher of men. He was a man of action with a heart as big as the oceans he sailed. He had fun. He was fun.

Elliot Salloway was an incandescent presence wherever he was, and I am one of a legion who will miss him.

by BGF | Oct 28, 2018 | News

On October 23, 2018, the Public Voice Symposium on AI, Ethics, and Fundamental Rights concluded successfully under the moderation of the Public Voice Coalition. The event was held in conjunction with the 40th International Conference of Data Protection and Privacy Commissioners.

Photo: Speakers and Guest Panel at the Public Voice Symposium

A broad coalition of civil society organizations under the umbrella of the Public Voice Coalition jointly hosted the event, which consisted of three parts. The first was a keynote speech by Professor Anita Allen, Vice Provost for Faculty, Henry R. Silverman Professor of Law, and Professor of Philosophy at the University of Pennsylvania Law School. Following the keynote, two panels discussed AI’s impacts on human rights, consumer protection, and competition; how to enforce existing laws in the age of AI; the relationship between ethics and the law; and more. The first panel, moderated by Ms. Anna Fielder, Senior Policy Advisor, Trans Atlantic Consumer Dialogue (TACD), focused on AI’s impacts on established legal frameworks. Panel 2, moderated by Marc Rotenberg, President of the Electronic Privacy Information Center (EPIC) and a member of the AIWS Standards and Practice Committee, covered the relationship between ethics and the law in the realm of AI. Notably, Panel 2 included Ms. Elizabeth Denham, UK Information Commissioner, Information Commissioner’s Office (ICO); Ms. Helen Dixon, Data Protection Commissioner for Ireland; Ms. Andrea Jelinek, Chair, European Data Protection Board, and Head, Austria Data Protection Authority; and Mr. Nguyen Anh Tuan, Director of the Michael Dukakis Institute for Leadership and Innovation (MDI).

Photo: Guest Panel at the Public Voice Symposium

Photo: Mr. Nguyen Anh Tuan, Director of the Michael Dukakis Institute for Leadership and Innovation (MDI) and CEO of the Boston Global Forum (BGF), in the panel discussion at the 2018 Public Voice Symposium

At the symposium, the panelists officially published the Universal Guidelines for Artificial Intelligence, a set of proposals to inform and improve the design and use of AI. The Guidelines are intended to maximize the benefits of AI, to minimize the risk, and to ensure the protection of human rights. These Guidelines should be incorporated into ethical standards, adopted in national law and international agreements, and built into the design of systems. The Guidelines play an important role in helping leaders across regions find a common voice on AI ethics, and they are also considered a Charter of the AIWS.

by BGF | Oct 28, 2018 | News

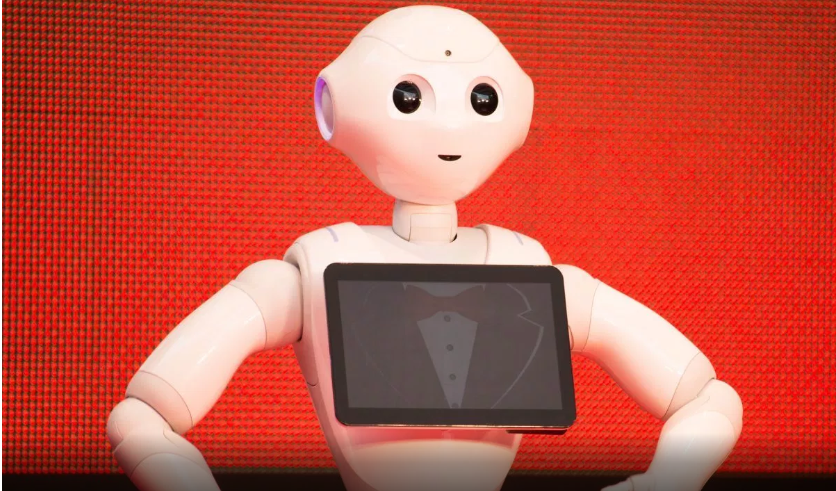

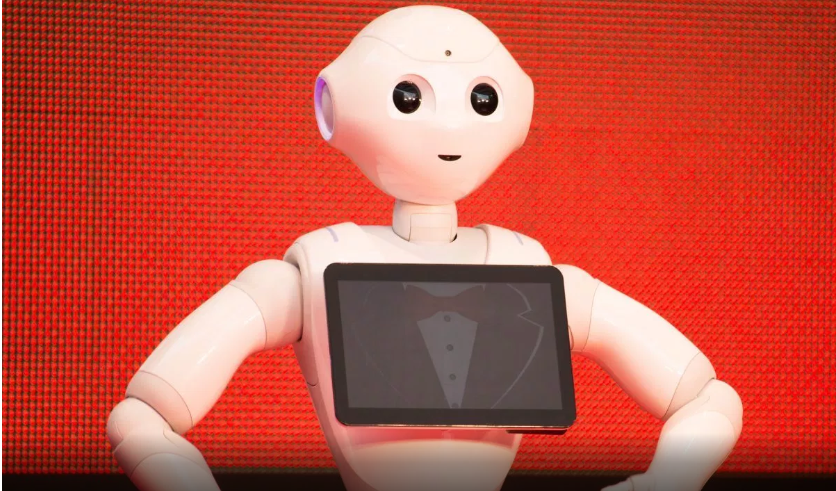

Pepper, a robot developed by SoftBank, is set to be the first humanoid to testify before the UK Parliament about AI.

Since the beginning of the fourth industrial revolution, considerable changes have taken place, bringing benefits as well as challenges for humanity. One of the biggest debates concerns the rise of AI and robotics, which is expected to alter society significantly. Leaders, researchers, and experts have offered many explanations of the development of AI and robotics, but rarely do we hear from the robots themselves. “If we’ve got the march of the robots, we perhaps need the march of the robots to our select committee to give evidence,” said Robert Halfon, chair of the Commons Education Select Committee.

Hence, Pepper will be the one to testify on AI and robotics before the Commons Education Select Committee. Equipped with microphones, two HD cameras, and a touchscreen on its chest for illustration, the robot will help answer the Committee’s questions about Industry 4.0.

“This is not about someone bringing an electronic toy robot and doing a demonstration,” said Mr. Halfon. “It’s about showing the potential of robotics and artificial intelligence and the impact it has on skills.”

For better or worse, the future of AI lies mainly in the hands of its developers: it is for us humans to decide. Hence, developers need a clear set of guidelines on ethics and standards that covers not just what to make but also how to make it ethically. This is exactly what the Michael Dukakis Institute for Leadership and Innovation (MDI) is attempting to provide. So far, the organization has been working on developing the AIWS Index for governments and enterprises.

by BGF | Oct 28, 2018 | News

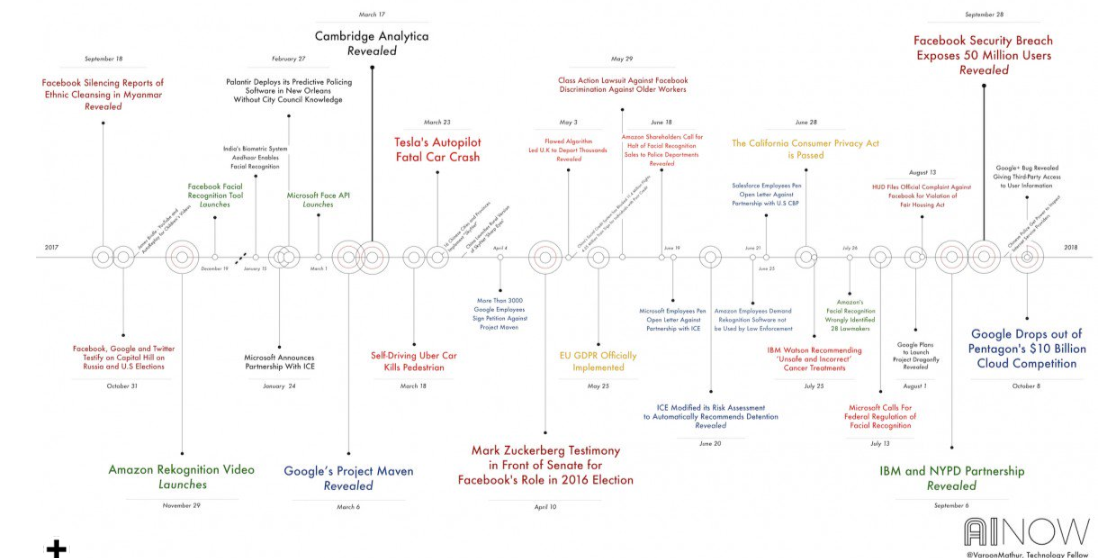

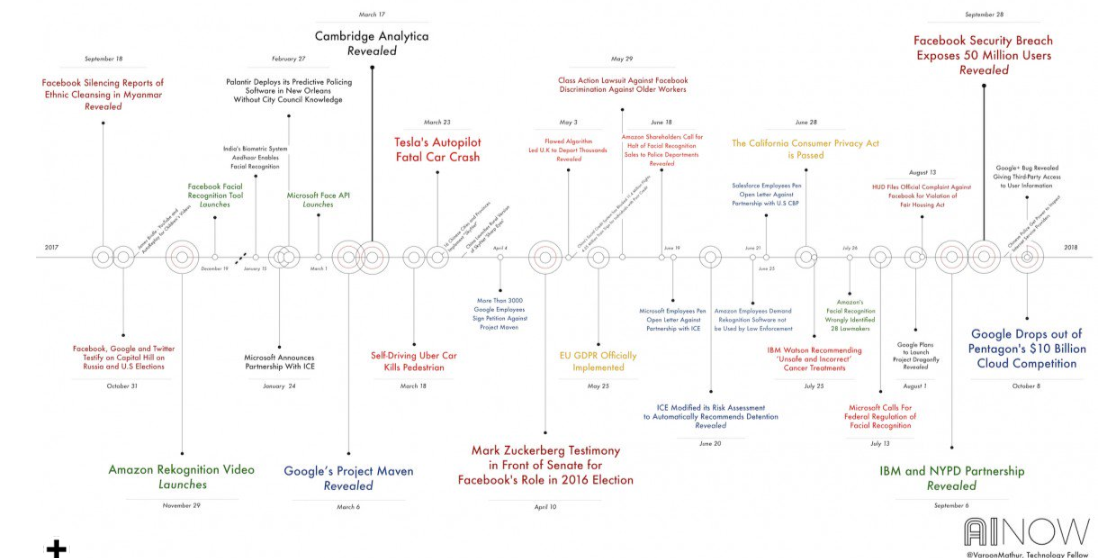

In a conversation at the AI Now 2018 Symposium, some legal scholars argued that, because people’s morals differ with their origin and class, ethics are too subjective for AI to follow.

Researchers, leaders, and activists around the world have emphasized that AI, and likewise its developers, must follow standards to ensure human safety. But who gets to define and enforce those ethics?

MIT researchers have developed a platform known as the Moral Machine, which examines how people’s opinions diverge on driving situations by asking what they would prioritize in an accident. The results from millions of people around the world show huge variation across cultures. Moreover, a study from North Carolina State University found that reading ethical standards does not actually affect people’s behavior: after reading a code of ethics, software engineers still do not do anything differently.

In the discussion, Philip Alston, an international legal scholar at NYU’s School of Law, proposed a solution to the ambiguous and unaccountable nature of ethics: reframing AI-driven consequences in terms of human rights. “[Human rights are] in the constitution,” said Alston. “They’re in the bill of rights; they’ve been interpreted by courts. If an AI system takes away people’s basic rights, then it should not be acceptable,” he added.

The Michael Dukakis Institute is currently collaborating with AI World to publish reports and programs on AI-Government, including the AIWS Index and AIWS Products, to solve key problems in ethical AI faced by businesses, governments and the world at large. Notably, this year the Michael Dukakis Global Initiative is the International Sponsor of AI World – aiworld.com – coming to Boston on Dec 3-5, 2018. AI World Conference & Expo draws over 3,000 business and technology professionals from Global 2000 enterprises, and includes 100+ sessions, 200+ speakers and 85+ sponsors and exhibitors. AI World is the largest independent enterprise AI business event in the world. The conference program and special events focus on how AI is helping to drive business innovation at large companies and cover the complete state of the practice of AI today. AI World is organized around this singular goal: enabling business leaders to build a competitive advantage, drive new business models and opportunities, reduce operational costs and accelerate innovation efforts.

Link to the AI World Conference & Expo: https://aiworld.com/

by BGF | Oct 28, 2018 | News

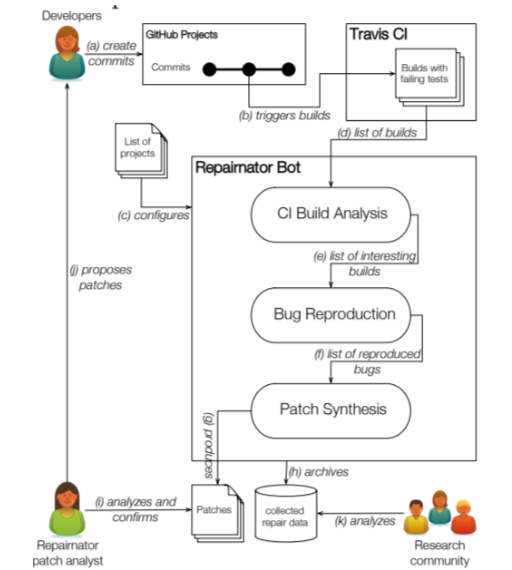

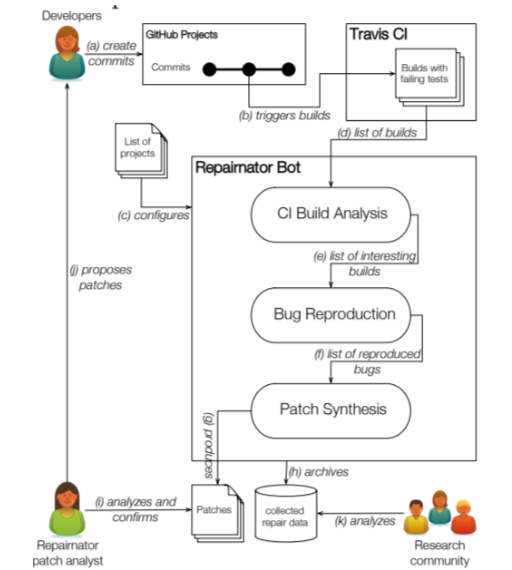

KTH Royal Institute of Technology in Stockholm has built a bot called Repairnator that surpasses humans in finding bugs and writing bug fixes.

A robot programmer known as Repairnator can fix bugs so well that it can compete with human engineers. This can be considered a milestone in software engineering research on automatic program repair.

Martin Monperrus and his team, the creators of the bot, tested it by having Repairnator pose as a human developer and compete with human developers to submit patches on GitHub, a website for developers. “The key idea of Repairnator is to automatically generate patches that repair build failures, then to show them to human developers, to finally see whether those human developers would accept them as valid contributions to the code base,” said Monperrus.

The team created a GitHub user called Luc Escape, presented as a software engineer at their research facility. “Luc has a profile picture and looks like a junior developer, eager to make open-source contributions on GitHub,” they say. They then set up Repairnator to operate under Luc’s identity.

Repairnator was put through two tests. The first ran from February to December 2017 and had the bot work on 14,188 GitHub projects, scanning them for errors. In that period, Repairnator analyzed over 11,500 builds with failures. Among those failures, it was able to reproduce the failure in 3,000 cases and developed a patch in 15 cases. However, none of those patches was usable, because Repairnator took a great deal of time to develop them and their quality was too low to be accepted.

In the second test, “Luc” was set to work on the Travis continuous integration service from January to June 2018. After a few adjustments, on January 12 it wrote a patch that a human moderator accepted into a build. Over six months, Repairnator went on to produce five acceptable patches, which means it can actually compete with humans in this field of work. However, the team ran into an issue when it received the following message from one of the developers: “We can only accept pull-requests which come from users who signed the Eclipse Foundation Contributor License Agreement.” Luc, being a bot, cannot sign a license agreement. “Who owns the intellectual property and responsibility of a bot contribution: the robot operator, the bot implementer or the repair algorithm designer?” asked Monperrus and his colleagues. The bot could bring enormous potential to the industry; however, it also poses challenges concerning rights, intellectual property, and responsibility.

The issues related to the rights and responsibilities of AI systems are studied in the AIWS Initiative of the Michael Dukakis Institute for Leadership and Innovation (MDI). Layer 3 of the AIWS 7-Layer Model focuses primarily on AI development and resources, including data governance, accountability, development standards, and the responsibilities of all practitioners involved directly or indirectly in creating AI.