by BGF | Oct 28, 2018 | News

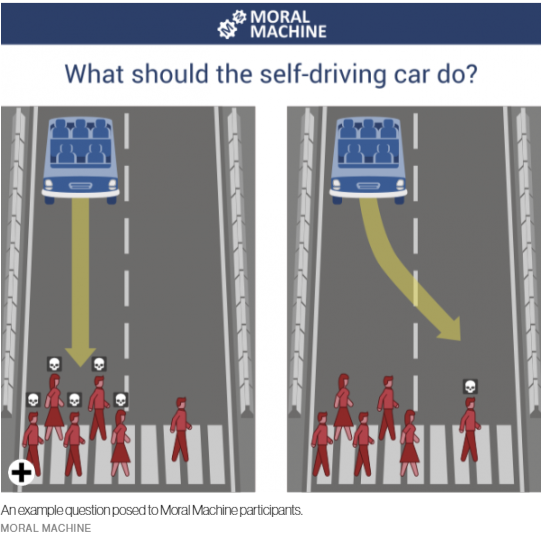

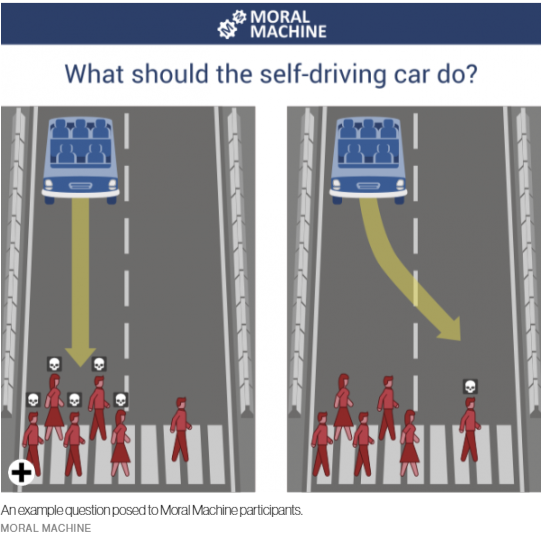

In 2014, the MIT Media Lab launched the Moral Machine, a platform designed to crowdsource data on how people from different cultures prioritize lives in moral dilemmas faced by self-driving cars.

After four years of research, millions of people in 233 countries have made 40 million decisions, making it one of the largest studies ever done on global moral preferences. The platform tests people’s judgments with variations of the “trolley problem”: a self-driving car on the road faces an unavoidable crash and must choose whom to hit and whom to spare. Which lives should be sacrificed to save the others? The scenarios cover nine comparisons: should a self-driving car prioritize humans over pets, passengers over pedestrians, more lives over fewer, women over men, young over old, fit over sickly, higher social status over lower, and law-abiders over law-benders? And should it swerve (take action) or stay on course (inaction)?

The data reveal that people’s moral preferences for AI vary across cultures, economies, and geographic locations. While participants from collectivist cultures such as China and Japan are more likely to spare the old, those from more individualistic cultures are more likely to spare the young.

In terms of economics, participants from low-income countries are more tolerant of jaywalkers relative to pedestrians who cross legally. In countries with a high level of economic inequality, participants show a larger gap in how they treat individuals of high and low social status.

Unlike in the past, we now have machines that can learn from experience and act on their own. This calls for comprehensive standards to regulate and control unpredictable disruptions from artificial intelligence, an aim shared by the research of major independent organizations such as the MIT Media Lab, the IEEE, and the Michael Dukakis Institute.

by BGF | Oct 28, 2018 | News, Practices in Cybersecurity

Mikko Hypponen, a renowned authority on computer security and the Chief Research Officer of F-Secure, was invited by Tomorrow’s Capital to discuss cybercrime and how quickly it is growing.

Mikko Hypponen, Chief Research Officer of F-Secure, was honored as Practitioner in Cybersecurity by the Boston Global Forum and the Michael Dukakis Institute on December 12, 2015, for his work and active contribution to the public’s knowledge of cybersecurity.

At the beginning of October, Hypponen appeared on Nasdaq’s Tomorrow’s Capital podcast to talk about cybercrime. He described how cryptocurrencies have fueled an increase in crime because they are easier to target and steal. This has even led to cases in which people who hold cryptocurrency are targeted or killed, after which the stolen funds are untraceable for local authorities because of the currency’s decentralized nature.

Beyond that, the Internet of Things has become another concern: everyday devices are now connected, usually through a smartphone app that links them to everything else. “Let’s say they’ve bought an IoT washing machine and they are told that it’s hackable. Well, what they think is that, well, I don’t care. It’s a washing machine…Well, that’s not the point. They are not hacking your washing machine or your fridges to gain access to your washing machine or to your fridge. They are hacking those devices to gain access to your network,” said Hypponen.

This means that not only financial institutions are at risk today, but also social infrastructure and even our privacy. Hypponen therefore emphasizes that cybersecurity efforts should not be overlooked. It’s a new world, one that requires new ideas, innovations, and tactics to ensure a safer future.

by BGF | Oct 28, 2018 | News

On 27–28 March, during a trip to the United Kingdom, Dr. Vaira Vīķe-Freiberga, President of the World Leadership Alliance – Club de Madrid, participated in events in London and Edinburgh marking the centenary of Latvia’s statehood.

Dr. Vaira Vīķe-Freiberga is in the middle of the photo. She served as President of Latvia from 1999 to 2007 and is currently President of the World Leadership Alliance – Club de Madrid, which partners with the Michael Dukakis Institute for Leadership and Innovation on the AIWS 7-Layer Model to Build Next Generation Democracy.

At the events in London and Edinburgh marking 100 years of Latvian statehood, President Vīķe-Freiberga emphasized the nation’s key values: freedom, democracy, and equality.

On 27 March, the Embassy of Latvia and the European Bank for Reconstruction and Development (EBRD) held a discussion with Sergei Guriev, EBRD Chief Economist, and Alexia Latortue, EBRD Managing Director for Corporate Strategy, at which Dr. Vīķe-Freiberga spoke on the theme “Women, Development and Democracy”. She explained the mechanisms of social stigmatization and their negative influence on the development of society.

The next day, Dr. Vīķe-Freiberga delivered a lecture on “Values and Democracy in the Contemporary World” to students and professionals at the University of Edinburgh. She not only explained the place of the Latvian people in the development of European civilisation and values, but also addressed the controversial debate over applying the Istanbul Convention (the Council of Europe Convention on preventing and combating violence against women and domestic violence) in Latvia. She strongly emphasized that liberal values do not run counter to family values. The lecture is considered one of the university’s best-attended events in recent years.

by BGF | Oct 21, 2018 | 7-Layer Model of AI World Society

Professor Marc Rotenberg, President of the Electronic Privacy Information Center (EPIC) and a member of the Michael Dukakis Institute’s AIWS Standards and Practice Committee, recently published the Universal Guidelines for AI. The guidelines will be opened for public sign-on and then formally released at the Public Voice event in Brussels on October 23, 2018.

The emergence of AI is transforming the world, setting everything from science and industry to government administration and finance on a new course. The rise of AI decision-making also implicates the fundamental rights of fairness, accountability, and democracy. Many of these decisions are opaque to the people they affect, leaving them unable to tell whether the decisions were accurate.

In response, Prof. Rotenberg proposed these Universal Guidelines to guide the design and use of AI. They form part of the first layer of the AIWS 7-Layer Model developed by the Michael Dukakis Institute, whose goals are to create an AI society for a better world and to ensure peace, security, and prosperity.

These guidelines should be incorporated into ethical standards, adopted in national law and international agreements, and built into the design of systems. The guidelines comprise twelve principles:

- Right to Transparency. All individuals have the right to know the basis of an AI decision that concerns them. This includes access to the factors, the logic, and techniques that produced the outcome.

- Right to Human Determination. All individuals have the right to a final determination made by a person.

- Identification Obligation. The true operator of an AI system must be made known to the public.

- Accountability Obligation. Institutions must be responsible for decisions made by an AI system.

- Fairness Obligation. Institutions must ensure that AI systems do not reflect bias or make impermissible discriminatory decisions.

- Accuracy, Reliability, Validity, and Replicability Obligations. Institutions must ensure the accuracy, reliability, validity, and replicability of decisions.

- Data Quality Obligation. Institutions must ensure data provenance, quality, and relevance for the data input into algorithms. Secondary uses of data collected for AI processing must not exceed the original purpose of collection.

- Public Safety Obligation. Institutions must assess the public safety risks that arise from the deployment of AI systems that direct or control physical devices.

- Cybersecurity Obligation. Institutions must secure AI systems against cybersecurity threats.

- Prohibition on Secret Profiling. No institution shall establish or maintain a secret profile on an individual.

- Prohibition on National Scoring. No national government shall establish or maintain a score on its citizens or residents.

- Termination Obligation. An institution that has established an AI system has an affirmative obligation to terminate the system if it can no longer maintain control of the system.

The Universal Guidelines for AI are currently open to public sign-on by individuals and organizations. On October 23, they will be introduced at the Public Voice event in Brussels.

by BGF | Oct 21, 2018 | News

The AIWS Standards and Practice Committee of the Michael Dukakis Institute welcomes two new members: Prof. Mikhail Kupriyanov of Saint Petersburg Electrotechnical University “LETI”, Russia, and Prof. Thomas Creely of the U.S. Naval War College.

The Committee was established to:

- Collect and update information on threats and potential harm posed by AI.

- Connect companies, universities, and governments to find ways to prevent threats and potential harm.

- Engage in the audit of behaviors and decisions in the creation of AI.

- Create an Index and a Report on AI threats, and identify the sources of those threats.

- Create a Report on respect for, and application of, ethics codes and standards of governments, companies, universities, individuals and all others…

The AIWS Standards and Practice Committee, founded by Governor Michael Dukakis, has 23 members. We are delighted to introduce our two newest members.

The first is Prof. Mikhail Kupriyanov, an expert in intelligent methods for data and process analysis, artificial intelligence, and embedded systems. He has been a professor in the Department of Computer Engineering at LETI since 1993 and has headed the department since 2015. Since 2010, he has also served as head of the Faculty of Computer Technologies and Informatics at LETI, and since 2018 as Director of the Education Department.

The second is Prof. Thomas Creely, Associate Professor of Ethics and Director of the Ethics & Emerging Military Technology Graduate Program. He serves on a NATO Science and Technology Organization Technical Team and is the lead for leadership and ethics in Brown University’s Executive Master of Cybersecurity program. He also serves on the Conference Board Global Business Conduct Council and the Robert S. Hartmann Institute Board, and as Business Ethics Chair of the Association for Practical & Professional Ethics.