by BGF | Apr 29, 2019 | News

Arnaud Mentre, Consul General of France in Boston and representative of the French Government, received the Artificial Intelligence Society Initiative for the 2019 G7 Summit.

The AIWS-G7 Summit Initiative was announced on April 25, 2019, at the AIWS-G7 Summit Conference at Loeb House, Harvard University.

The Initiative is titled The Next Generation Democracy – AI World Society. The AIWS Model envisions a society where creativity, innovation, tolerance, democracy, the rule of law, and individual rights are recognized and promoted, where AI is used to assist and improve government decision-making, and where AI is a means of giving citizens a larger voice in governing.

The AIWS-G7 Summit Initiative has three parts: AI-Government, AI-Citizen, and AI Government Index.

The co-authors are Governor Michael Dukakis, Chairman of the Boston Global Forum; Mr. Nguyen Anh Tuan, CEO of the Boston Global Forum; David Bray, Executive Director of The People-Centered Internet coalition; Professor Nazli Choucri, MIT; Professor Thomas Creely, Naval War College; Mr. Paul Nemitz, Principal Advisor, Directorate General for Justice and Consumers, the European Commission; Professor Thomas Patterson, Harvard; David Silbersweig, Harvard; President Vaira Vike-Freiberga, President of World Leadership Alliance-Club de Madrid; and Mr. Kazuo Yano, Chief Engineer of Hitachi.

Consul General of France Arnaud Mentre received the AIWS-G7 Summit Initiative at the Conference.

by BGF | Apr 29, 2019 | Event Updates

On the morning of April 25, 2019, the Boston Global Forum (BGF) held the AIWS-G7 Summit Conference at Loeb House, Harvard University.

The conference was sponsored by the Government of the Commonwealth of Massachusetts and supported by the French Government.

Mr. Arnaud Mentre, Consul General of France in Boston and representative of the French Government, received the BGF's AIWS-G7 Summit Initiative. He delivered a keynote speech at the conference titled “The French Perspective on Artificial Intelligence and the G7 Summit”.

On behalf of the co-authors, Professor Thomas Patterson introduced the AIWS-G7 Summit Initiative.

At the event, the Boston Global Forum honored Vint Cerf, one of the Fathers of the Internet, as World Leader in AI World Society.

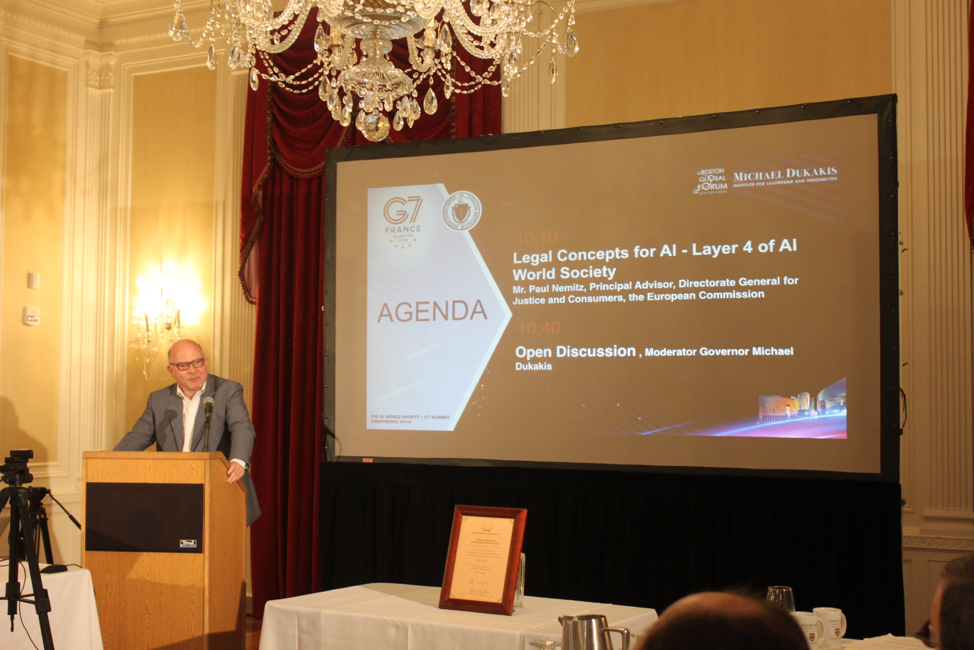

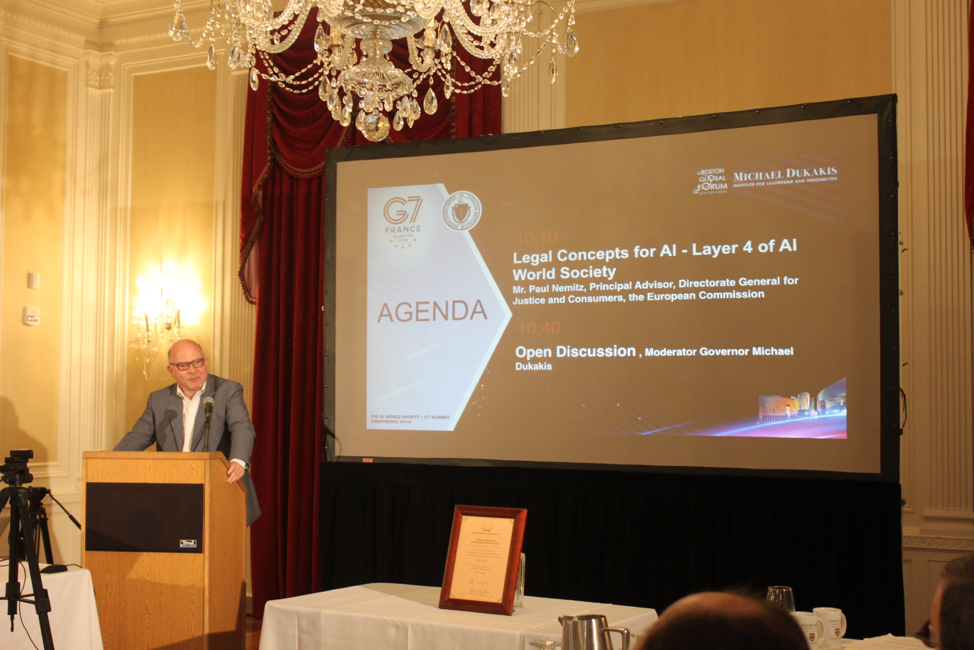

Mr. Paul Nemitz presented the principles of creating Artificial Intelligence Law, layer 4 of the 7-layer Artificial Intelligence Society model.

In his talk, “Legal Concepts for AI”, covering Layer 4 of the AI World Society model, Mr. Nemitz introduced concepts of international AI law. This is pioneering work in the field of AI law.

His presentation prompted a thought-provoking discussion among professors from Harvard University and MIT, including Professor Neil Gershenfeld of MIT.

Mr. Nam Pham, Assistant Secretary of Business Development and International Trade for the Government of Massachusetts, gave a speech affirming the Government of Massachusetts's support for the AIWS-Summit.

by BGF | Apr 29, 2019 | News

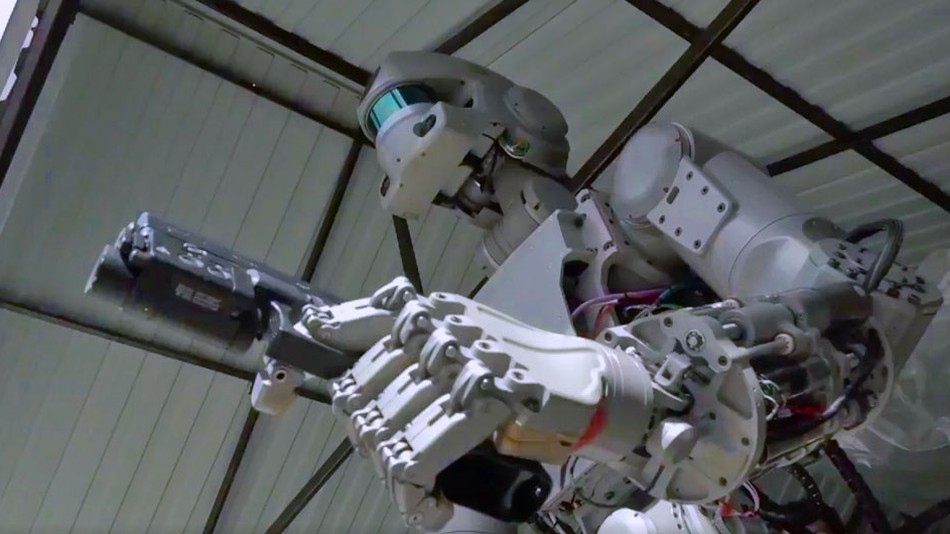

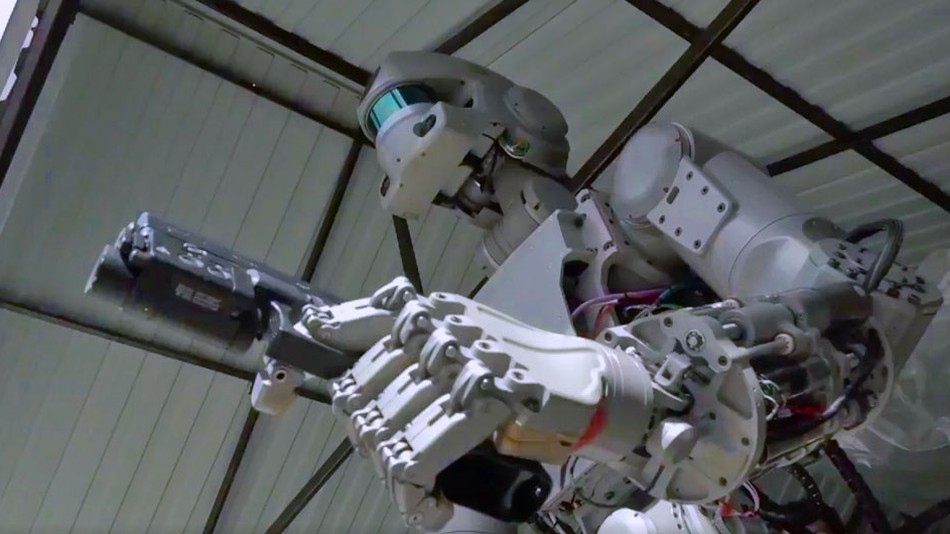

Two years ago, arms control advocates had reason for hope when scores of countries met at the United Nations in Geneva to discuss the future of lethal autonomous weapons systems, or LAWS. The main goal was to limit or regulate military AI. At the time, Russia was strongly against these efforts, arguing that “it is hardly acceptable for the work on LAWS to restrict the freedom to enjoy the benefits of autonomous technologies being the future of humankind” and that “the difficulty of making a clear distinction between civilian and military developments of autonomous systems based on the same technologies is still an essential obstacle in the discussion on LAWS.” (source: defenseone.com)

Fast forward to today, and Russia is seemingly changing its stance on this issue. At least, that is the signal sent by Russian Security Council Secretary Nikolai Patrushev, who said this week, “we believe that it is necessary to activate the powers of the global community, chiefly at the UN venue, as quickly as possible to develop a comprehensive regulatory framework that would prevent the use of the specified [new] technologies for undermining national and international security.”

This reversal of course by Russia is interesting considering its strengthened support for AI use in military applications during the past two years. President Vladimir Putin even said that AI is necessary in weapons production.

Russian officials now seem interested in standards for AI. What caused Russia to change its stance? Regardless of the answer, the AI World Society welcomes Russia’s softened approach to this important matter. We hope that our Comprehensive Report and Guidelines on AI Ethics could be helpful.

by BGF | Apr 29, 2019 | News

In recent years, researchers and journalists have highlighted how artificial intelligence sometimes stumbles when it comes to minorities and women. Facial recognition technology, for example, is more likely to become confused when scanning dark-skinned women than light-skinned men.

Last week, AI Now, a research group at New York University, released a study about A.I.’s diversity crisis. The report said that a lack of diversity among the people who create artificial intelligence and in the data they use to train it has created huge shortcomings in the technology.

For example, 80% of university professors who specialize in A.I. are men, the report said. Meanwhile, at leading A.I. companies like Facebook, women comprise only 15% of the A.I. research staff while at Google, women account for only 10%.

Furthermore, Timnit Gebru, who is an A.I. researcher at Google, is cited in the report as saying “she was one of six black people—out of 8,500 attendees” at a leading A.I. conference in 2016.

The report’s authors believe that the problem of A.I. performing poorly with certain groups could be fixed if a more diverse set of eyeballs was involved in the technology’s development. And while tech companies say they are aware of the problem, they haven’t done much to fix it, the report said.

One possible solution is for companies to examine and repair any workplace cultures that are off-putting to women and people of color. Most women, for instance, wouldn’t want to work at a company if they knew that it tolerates bigotry and unequal wages between the genders.

Another solution for improving workplace diversity is for companies to be more transparent, which signals to prospective employees their seriousness about the issue. This could include publishing employee compensation figures broken down by race and gender, releasing harassment and discrimination reports that reveal the number of such incidents, and ensuring that executive salaries “are tied to increases in hiring and retention of under-represented groups.”

It’s these types of public steps that could lead to more people of diverse backgrounds working on A.I., the report said, ensuring that the next big A.I. breakthrough benefits everyone.

On a related note, Joy Buolamwini, the founder of the Algorithmic Justice League and a graduate researcher at the MIT Media Lab who did not work on the report discussed here, has done remarkable work chronicling A.I. bias problems in facial recognition systems. That work earned her a spot on Fortune’s World’s Greatest Leaders list, published last week alongside a number of other techies.

by BGF | Apr 29, 2019 | News

Speech analysis software could revolutionize how we diagnose mental disorders.

Listen Closely

For years, post-traumatic stress disorder (PTSD) has been one of the most challenging disorders to diagnose. Traditional methods, like one-on-one clinical interviews, can be inaccurate because of the clinician’s subjectivity or because the patient is holding back their symptoms.

Now, researchers at New York University say they’ve taken the guesswork out of diagnosing PTSD in veterans by using artificial intelligence to objectively detect PTSD by listening to the sound of someone’s voice. Their research, conducted alongside SRI International, the research institute responsible for bringing Siri to iPhones, was published Monday in the journal Depression and Anxiety.

According to The New York Times, SRI and NYU spent five years developing a voice analysis program that not only understands human speech but can also detect PTSD signifiers and emotions. As the NYT reports, this is the same process that teaches automated customer service programs how to deal with angry callers: By listening for minor variables and auditory markers that would be imperceptible to the human ear, the researchers say the algorithm can diagnose PTSD with 89% accuracy.

Objective Diagnosis

Researchers interviewed and recorded 129 war-zone exposed veterans and gathered 40,000 speech samples to study. Then they used the audio to teach the AI which vocal changes correlated with diagnoses of PTSD — a slower, more monotonous cadence was an indicator of PTSD, as well as a shorter tonal range with less enunciation.

The AI, they say, can detect minute changes in the voice, like the tension of throat muscles and whether the tongue touches the lips — all potential indicators of a PTSD diagnosis.

“They were not the speech features we thought,” Charles Marmar, a psychiatry professor at NYU and one of the authors of the paper, told the NYT. “We thought the telling features would reflect agitated speech. In point of fact, when we saw the data, the features are flatter, more atonal speech. We were capturing the numbness that is so typical of PTSD patients.”

Although the AI is a breakthrough for VA clinicians, there are blind spots. Because the training data came only from male combat veterans, the scope of the program’s potential is limited to men in the military, though it could be a proof of concept toward a more universal technology. As it’s refined, speech analysis could become an effective biomarker for objectively identifying the disorder, allowing clinicians to accurately diagnose veterans and give them the mental health support they need.