by Editor BGF | May 4, 2026 | Shinzo Abe Initiative for Peace and Security, News

Yasuhide Nakayama’s Remarks

Member of the National Diet of Japan; Director, LDP Global South

May 1, 2026

It is a great honor for me to join you today at this remarkable conference, America at 250: A Beacon for the AI Age.

At this historic moment, we celebrate not only 250 years of America, but also a larger vision for the future of humanity in the Age of Artificial Intelligence.

I am especially honored to be here in two roles: as BGF Representative in Japan, and as Director of LDP Global South.

In both roles, I wish to express my deep appreciation for the vision and leadership of the Boston Global Forum.

The ideas advanced here are not ordinary ideas.

They are ideas for a new era.

They are ideas to help guide humanity through one of the greatest transformations in history.

Among them, I believe AIWS Trust Infrastructure is of profound importance.

In the AI Age, trust must not remain an abstract ideal.

It must become a real foundation for governance, cooperation, innovation, and peace.

It must be built into the systems we create, the institutions we lead, and the partnerships we form.

That is why I am very pleased to support the advancement of AIWS Trust Infrastructure in Japan and across the Global South. I believe this effort can help open a new path — a path where AI is guided not only by capability, but by responsibility; not only by speed, but by wisdom; not only by power, but by trust.

I also wish to express my deep respect for AIWS Lumina.

To me, AIWS Lumina is a beautiful and necessary vision.

It reminds us that the future cannot be shaped by technology alone.

The AI Age must also be guided by culture, by beauty, by reflection, and by the noble values that elevate the human spirit.

In this, I feel that Japan is closely aligned with the standards of AIWS Lumina.

The spirit of harmony.

The respect for beauty.

The discipline of culture.

The value of reflection.

The connection between tradition and the future.

These are qualities deeply rooted in Japanese civilization.

As early as the 7th century, Prince Shotoku taught us:

“Harmony should be valued above all, and discord should be avoided.”

At the same time, he also reminded us:

“Every person has a heart, and each holds their own views.”

In other words, diversity of thought is natural — and true harmony does not come from uniformity, but from respecting differences and seeking balance.

And harmony, in its true sense, does not mean silence or compromise at any cost.

We must have the courage to recognize what is right, and to call out what is wrong.

This spirit of harmonizing differences, respecting each other, and upholding fairness and integrity resonates strongly with the ideals of Love, Creativity, and Nobility.

For this reason, I believe Japan can and should become an important home for the annual activities of AIWS Lumina.

I would be very glad to support the organization of AIWS Lumina annual events in Japan, in close connection with Boston, helping to build a living bridge between Japan and the United States — and beyond that, a bridge of trust, culture, and shared human values for the world.

Let us work together to ensure that the AI Age will be not only more advanced, but also more trusted.

Not only more intelligent, but also more humane.

Not only more powerful, but also more noble.

Thank you very much.

Yasuhide Nakayama

by Editor BGF | May 4, 2026 | News, Shaping Futures

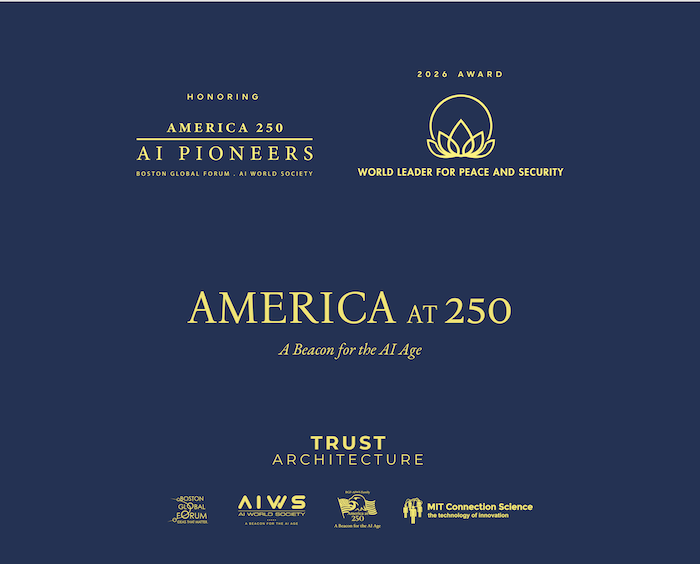

America 250: AI Pioneers Award

At the America at 250: A Beacon for the AI Age Conference on May 1, 2026, Professor Cynthia Dwork of Harvard University delivered her acceptance remarks for the America 250: AI Pioneers Award, on behalf of the distinguished honorees recognized by the Boston Global Forum and the AI World Society.

In her presentation, Professor Dwork offered a profound introduction to Differential Privacy—a foundational scientific framework for protecting individual privacy in data analysis and artificial intelligence. She explained that privacy is not merely about whether an individual is included in a dataset, but about ensuring that anything that can be learned from the data could still be learned even if that individual had opted out.
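The opt-out idea described above can be made concrete with a small sketch. The example below is an illustration only and is not taken from Professor Dwork's remarks or slides. It assumes the Laplace mechanism applied to a simple counting query; the function name `private_count`, the sample ages, and the `epsilon` value are all hypothetical.

```python
import random


def private_count(records, predicate, epsilon, rng=None):
    """Return a noisy count of matching records (Laplace mechanism sketch)."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    if rng is None:
        rng = random.Random()
    true_count = sum(1 for record in records if predicate(record))
    # A counting query has sensitivity 1: adding or removing one person
    # changes the true count by at most 1, so the noise scale is 1/epsilon.
    scale = 1.0 / epsilon
    # The difference of two independent exponential samples with mean
    # `scale` is a Laplace(0, scale) sample.
    noise = rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)
    return true_count + noise


def over_30(age):
    return age > 30


# Hypothetical data: ages of survey participants.
ages = [34, 29, 41, 52, 38, 27, 45]
ages_without_one = ages[:-1]  # the same survey if the last person opted out

print(private_count(ages, over_30, epsilon=0.5))
print(private_count(ages_without_one, over_30, epsilon=0.5))
```

The two printed values come from overlapping noise distributions, so an observer cannot reliably tell from a single answer whether the last participant took part. In this sketch a smaller `epsilon` adds more noise, which gives stronger privacy at the cost of accuracy.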

Her remarks highlighted that Differential Privacy not only safeguards individuals, but also strengthens scientific integrity, protects intellectual property, and provides a critical foundation for trustworthy AI. This work represents a cornerstone for the future of AI governance, Trust Infrastructure, and human-centered AI in the emerging era.

Professor Dwork also emphasized that society faces fundamental choices: whether to scale AI responsibly or irresponsibly. These decisions, she noted, belong to the domains of governance, regulation, and democracy—where governments have a responsibility to protect the public and guide technological progress toward the common good.

Download Professor Cynthia Dwork’s Acceptance Remarks (PPT): https://bostonglobalforum.org/mdi/wp-content/uploads/sites/15/Boston-Global-Forum-Dwork-v2.pptx

Her contribution stands as a powerful reminder that the future of AI must be built not only on innovation, but on trust, responsibility, and respect for human dignity.

by Editor BGF | May 4, 2026 | Papers & Reports, Publications

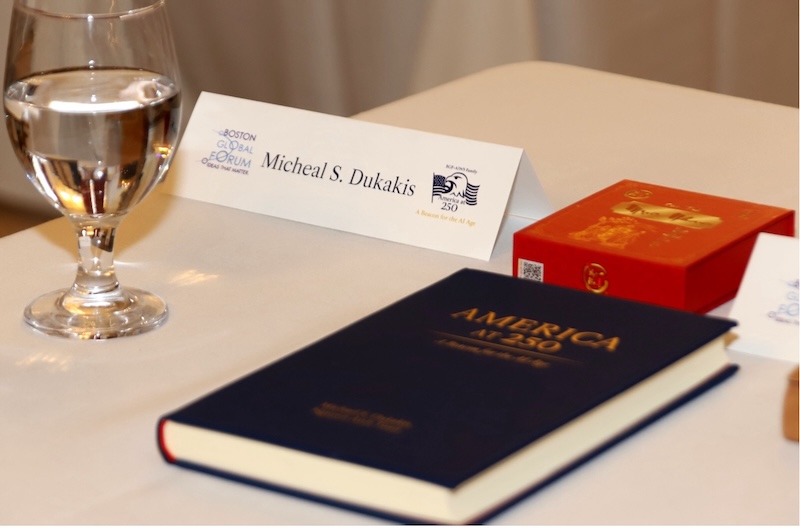

By Governor Michael S. Dukakis and Nguyen Anh Tuan

Explore a compelling vision for the future of AI grounded in trust, human dignity, and responsibility—praised by Governor Dukakis, who describes Nguyen Anh Tuan as “an exceptional thinker of the AI Age… with a profound vision for how technology can serve humanity.”

Download the book America at 250: A Beacon for the AI Age: https://bostonglobalforum.org/books/Americaat250-Book.pdf

by Editor BGF | May 4, 2026 | Global Alliance for Digital Governance

Today, May 1, 2026, at Harvard University’s Loeb House, on the occasion of America at 250: A Beacon for the AI Age, we stand not only to celebrate history, but to help shape the future.

We honor 250 years of America — 250 years of freedom, of creativity, of democratic aspiration, and of leadership that has helped inspire the modern world.

But today, we also look ahead. We look ahead to the Age of Artificial Intelligence.

And we affirm a truth of historic importance:

This new age cannot be guided by intelligence alone.

It cannot be guided by power alone.

It cannot be guided by innovation alone.

The AI Age must also be guided by trust.

By responsibility.

By human dignity.

By culture.

And by moral purpose.

For this reason, we declare today the advancement of two essential and complementary pillars for the future of humanity. The first is AIWS Trust Infrastructure — a practical architecture of trust for the AI Age, created to guide governance, institutions, business, and society through standards, accountability, trusted implementation, and human-centered values.

The second is AIWS Lumina — a global cultural architecture for the AI Age, grounded in Love, Creativity, and Nobility, created to illuminate the human spirit through culture, inspiration, beauty, and noble values in a time of profound technological transformation.

Together, these two pillars form a beacon for the AI Age. AIWS Trust Infrastructure builds the architecture of trust. AIWS Lumina illuminates the culture of humanity.

We further affirm that the Boston Global Forum holds the leading role in this effort — providing the strategic vision, the core standards, the guiding values, and the international direction for the advancement of both pillars.

All implementation partnerships and regional initiatives shall be developed in alignment with the strategy, standards, values, and leadership of BGF.

In this spirit, we welcome Vietnam Report as a committed implementation and support partner working with BGF to advance AIWS Trust Infrastructure and AIWS Lumina, especially in Vietnam and ASEAN, and in the activities leading toward the 10th anniversary of AIWS in 2027.

We welcome cooperation with LDP Global South, with the coordination of Yasuhide Nakayama, Diet Member and Director of LDP Global South.

We welcome Media Tenor as a cooperating partner in Europe.

To realize this Declaration, we establish today the Beacon Process: BGF will lead. Partners will implement in alignment.

And the road to AIWS 2027 will be a historic phase of realization. A phase to advance AIWS Trust Infrastructure. A phase to advance AIWS Lumina. A phase to help build an AI civilization that is not only intelligent, but also trustworthy, humane, creative, and noble.

This is our Beacon Declaration and Process for the AI Age.

by Editor BGF | Apr 26, 2026 | News

Boston Global Forum

Harvard University Loeb House

17 Quincy Street, Cambridge, Massachusetts

May 1, 2026 | 8:30 AM – 2:00 PM

Program Agenda

8:15 – 8:30 AM

Registration and Welcome Coffee

Celebrating the 250th Anniversary of the United States and Honor Presentations

8:30 – 8:40 AM

Opening Remarks: Thomas E. Patterson

8:40 – 8:50 AM

Presentation of the Book America at 250: A Beacon for the AI Age

Introduction to AIWS Trust Infrastructure, presented by Nguyen Anh Tuan

Vision, framework, and implementation pathway for trust in the AI Age

8:50 – 9:10 AM

Presentation of the 2026 World Leader for Peace and Security Award, Honoring the Leaders of the United States and the American People

Remarks by: Governor Michael S. Dukakis; Nguyen Anh Tuan; Thomas E. Patterson

Remarks delivered by Thomas E. Patterson on his own behalf and on behalf of Governor Michael S. Dukakis and Nguyen Anh Tuan

Symbolic Representatives Receiving the Award: Jason Carter, representing the moral leadership legacy of America through President Jimmy Carter; Vint Cerf, representing the creativity, innovation, and civic spirit of the American people in the digital age

Special Remarks: Jason Carter — America as a Moral Beacon: Continuing the Carter Legacy of Peace, Human Rights, and Trusted Innovation in the Age of AI; Vint Cerf (by video) — Building Information Trust for the AI Age

9:10 – 9:30 AM

Recognition of America 250: AI Pioneers, presented by Nguyen Anh Tuan

Recipients in person: Cynthia Dwork; Alex Pentland; Regina Barzilay; Cynthia Breazeal; Herbert Lin

Acceptance Speech by Cynthia Dwork, on behalf of the Honorees of America 250: AI Pioneers

From Differential Privacy to Trust Infrastructure: Building Trustworthy AI for Democracy

Main Panel

9:30 – 11:00 AM

AIWS Trust Infrastructure for the AI Age

Co-Moderators: Thomas E. Patterson and Alex Pentland

Alex Pentland – AI Pioneers — Building Human-Centered Trust Infrastructure for the AI Age

Herbert Lin – AI Pioneers — From Influence Operations to Information Trust: Building Resilience in the AI Age

Regina Barzilay – AI Pioneers — Trustworthy AI in Healthcare and High-Stakes Human Systems

Cynthia Breazeal – AI Pioneers — Human-Centered AI and Social Trust in the Age of Intelligent Systems

Daphne Koller – AI Pioneers (by video) — From Probabilistic AI to Trusted Information Ecosystems

Q&A

11:00 – 11:15 AM

Coffee Break

Beacon Declaration and Beacon Process

11:15 – 11:55 AM

Beacon Declaration and Beacon Process

Founding AIWS Trust Infrastructure and AIWS Lumina

Presented by Ramu Damodaran

Commitments to Accompany and Implement the Beacon Declaration

Yasuhide Nakayama (by video) — Member of the National Diet of Japan; Director, LDP Global South

Vu Dang Vinh (by video) — Vietnam Report

Roland Schatz — Media Tenor

Bui Ngoc Duc — Dat Xanh

Commitments to Accompany and Advance AIWS Lumina

Le Hoa (by video) — Cao Tra Muc Nhan

Special Video from Nha Trang in Tribute to America 250

Thu Pham

11:55 AM – 12:00 PM

Concluding Remarks — Nguyen Anh Tuan

12:30 – 2:00 PM

Luncheon Dialogue: Storytelling, Democracy, and America at 250

Hollywood, Civic Imagination, and the Future of Freedom in the AI Age

Harvard Faculty Club

Moderator: Gita Pullapilly

Panelists: Jason Carter; Don Lee

This special luncheon dialogue will explore how storytelling, film, and cultural leadership can sustain democratic values, deepen civic imagination, and strengthen public trust in an era shaped by artificial intelligence. The discussion will also explore the emerging concept and criteria of Films for Humanity in the Age of AI within the vision of AIWS Film Park, including the kinds of films and storytelling that should be encouraged in the AI Age: works that affirm human dignity, strengthen empathy, promote truth and responsibility, inspire reconciliation, and contribute to the cultural foundations of freedom and democratic life.

Concept Note

On the occasion of the 250th anniversary of the United States of America, the Boston Global Forum will convene the conference America at 250: A Beacon for the AI Age at Harvard University’s Loeb House on May 1, 2026. The conference honors the enduring ideals of America — liberty, democracy, innovation, peace, security, and service to humanity — while looking ahead to how America can continue to serve as a beacon of trusted, human-centered leadership in the Age of Artificial Intelligence.

Grounded in the spirit of the book America at 250: A Beacon for the AI Age, co-authored by Governor Michael S. Dukakis and Nguyen Anh Tuan, the conference brings together honor presentations, AI pioneer recognition, expert discussion, the Beacon Declaration and Beacon Process, and a luncheon dialogue on storytelling and democratic culture. It advances two important pillars for the AI Age: AIWS Trust Infrastructure and AIWS Lumina.

The conference has five main purposes:

- to celebrate America at 250 through the ideas and vision of the book;

- to present AIWS Trust Infrastructure as a practical framework for trust in the AI Age;

- to honor the leaders of the United States, the American people, and America 250: AI Pioneers;

- to launch a call to action and an implementation pathway through the Beacon Declaration and Beacon Process;

- and to highlight the role of storytelling, culture, and AIWS Lumina in sustaining democratic civilization in the AI Age.

A central feature of the conference is the presentation of the 2026 World Leader for Peace and Security Award, offered in honor of the leaders of the United States and the American people. The award will be received by Jason Carter and Vint Cerf as symbolic representatives. The conference will also recognize America 250: AI Pioneers, honoring distinguished pioneers whose work has helped shape the AI Age in service of humanity.

The main panel, AIWS Trust Infrastructure for the AI Age, co-moderated by Thomas E. Patterson and Alex Pentland, will feature leading honorees of America 250: AI Pioneers and explore trustworthy AI systems, trusted information, institutional resilience, healthcare, and human-centered design. The morning program will culminate in the Beacon Declaration and Beacon Process: Founding AIWS Trust Infrastructure and AIWS Lumina, followed by commitments from key partners to accompany and implement the vision.

A special luncheon dialogue on Storytelling, Democracy, and America at 250 will explore how film, narrative, and cultural leadership can strengthen freedom, dignity, empathy, and public trust in an era shaped by AI. In this way, the conference serves both as a tribute and as a call to action: to help shape a future in which trust, culture, and human responsibility remain at the center of the AI Age.