by Editor BGF | May 4, 2026 | News

World Leader for Peace and Security Award

America at 250: A Beacon for the AI Age

May 1, 2026, Harvard University Loeb House

Distinguished guests, honored leaders, dear friends, and members of the Boston Global Forum family,

Today, we—Governor Michael S. Dukakis, Nguyen Anh Tuan, and Professor Thomas E. Patterson, co-founders of the Boston Global Forum—have the profound honor of presenting the World Leader for Peace and Security Award to the Leaders of the United States and the American People.

We do so at a truly historic moment.

As America marks its 250th anniversary, we gather not only to celebrate the enduring journey of the United States, but also to honor a nation and a people whose contributions have helped shape the cause of liberty, democratic governance, peace, security, and human progress across generations.

For 250 years, the United States of America has stood as more than a nation. It has stood as a great and enduring idea: that human beings are born with dignity, that liberty is the right of all, that government must answer to the people, and that peace and security are strongest when rooted in justice, law, responsibility, and moral courage.

The American journey has never been simple. It has known hardship and hope, conflict and reconciliation, sacrifice and renewal. Yet again and again, the United States has shown an extraordinary capacity to rise, to renew itself, and to move forward with courage and purpose.

That is why this occasion carries such deep meaning.

Today, we honor the leaders of the United States, across generations, for bearing the heavy responsibilities of leadership in times of trial and transformation—for helping protect constitutional order, safeguard peace and security, and uphold principles that have inspired people far beyond America’s shores.

And we honor the American people, because the deepest strength of the United States has always lived in them: in their courage, their resilience, their creativity, their generosity, their civic spirit, and their determination to renew the promise of democracy for every generation.

America at 250 is not only the story of presidents, institutions, and historic documents. It is also the story of citizens and communities, of workers and teachers, of soldiers and scientists, of immigrants and dreamers, of families who built, defended, and renewed a nation that continues to inspire the world.

Today, this tribute has even greater significance because humanity is entering a new era—the AI Age.

In this age, peace and security must be understood more broadly and more deeply. They require not only national strength and strategic vision, but also the protection of human dignity, the integrity of information, the resilience of democratic institutions, and the responsible governance of artificial intelligence.

This is why America’s role remains so vital.

With its traditions of constitutional liberty, open inquiry, innovation, academic excellence, and democratic debate, the United States has a unique responsibility to help shape a future in which technology serves humanity, strengthens democracy, and advances civilization with wisdom and moral purpose.

At the Boston Global Forum, we have sought to contribute to that mission through initiatives such as AI World Society, The Social Contract for the AI Age, AIWS Government 24/7, and Trust Infrastructure for Democracy in the AI Age. These efforts are guided by a simple conviction: that in the AI Age, progress must be guided by ethics, freedom must be strengthened by trust, and intelligence must be elevated by humanity.

So today, as we honor the Leaders of the United States and the American People, we do so not only in recognition of the past, but also in hope for the future.

We honor America for what it has contributed to peace, security, liberty, democratic renewal, and the advancement of civilization.

And we look to America’s next chapter with confidence and hope—that it will continue to be a beacon of freedom, responsibility, innovation, trust, and humanity for the AI Age.

It is therefore with deep respect, admiration, and gratitude that we present the World Leader for Peace and Security Award to the Leaders of the United States and the American People.

May America continue to shine as a beacon for peace, security, liberty, and human dignity.

God bless America, and thank you.

by Editor BGF | May 4, 2026 | News

America at 250: A Beacon for the AI Age

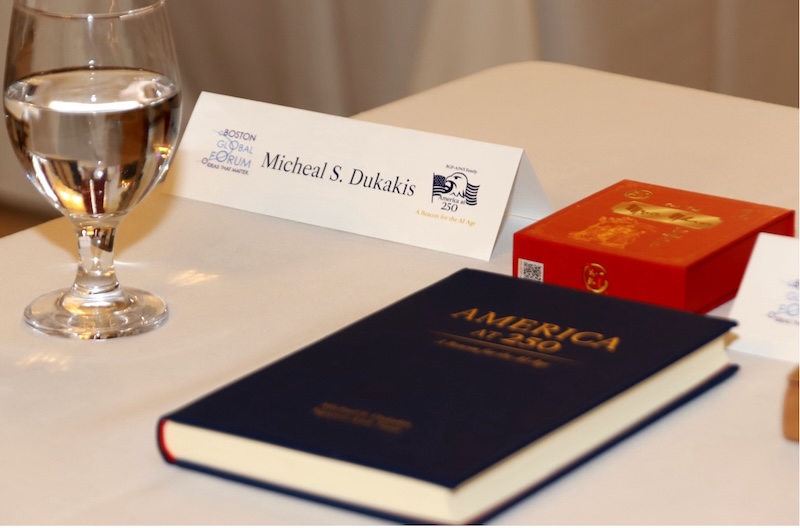

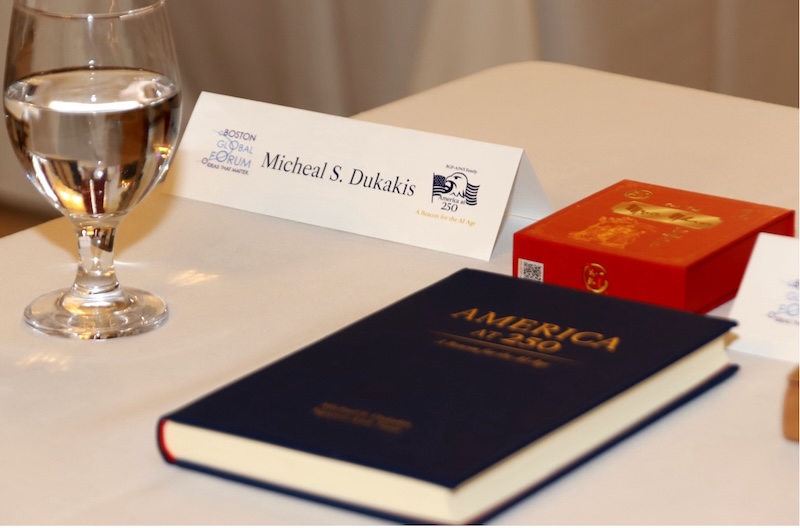

At the America at 250: A Beacon for the AI Age Conference on May 1, 2026, at Harvard University’s Loeb House, Nguyen Anh Tuan, Co-Founder, Co-Chair and CEO of the Boston Global Forum, presented the book America at 250: A Beacon for the AI Age, co-authored with Governor Michael S. Dukakis.

The presentation took place in the presence of Governor Dukakis, along with distinguished participants including those honored with the 2026 World Leader for Peace and Security Award and the America 250: AI Pioneers Award—leaders and innovators shaping the future of the AI Age.

In this book, Nguyen Anh Tuan and Governor Dukakis offer a forward-looking vision for the United States and the world, emphasizing the importance of trust, human dignity, ethical responsibility, and democratic values in guiding the development of artificial intelligence. The book introduces key concepts such as AIWS Trust Infrastructure, AIWS Trusted Order, and AIWS Government 24/7, outlining a comprehensive framework for a more trustworthy and human-centered AI future.

This book is not only a reflection on America’s 250th anniversary, but also a call to action—inviting leaders, thinkers, and citizens around the world to help build a future where AI serves humanity with trust, responsibility, and vision.

Download the Book

The soft copy of the book is available for download here: https://bostonglobalforum.org/books/Americaat250-Book.pdf

Complimentary Printed Copies

Fifty printed copies will be available as gifts.

If you would like to receive a complimentary copy, please send your request to:

[email protected]

by Editor BGF | May 4, 2026 | World Leader for Peace and Security, News, World Leaders in AIWS Award Updates

JASON CARTER

Chairman of the Board, The Carter Center

America at 250: A Beacon for the AI Age · Loeb House, Harvard University

Hosted by the Boston Global Forum and the AI World Society · May 1, 2026

Thank you very much. It is a great honor for me to be here today — to celebrate this moment in our country’s history, and to do so on behalf of my grandparents’ legacy and the people of the Carter Center, and the work we have done together.

Let me begin, of course, by saying thank you to Tuan, to Professor Patterson, to Governor Dukakis, and to the other remarkable leaders here who are going to define the next century of thought as we change, dramatically, the way we interact with each other and with the world.

I thought I would reflect for just a moment on my grandfather, Jimmy Carter, who lived for one hundred of these 250 years of American history. The remarkable story of his life — and what he did with technology — is, I think, indicative of how the American people, and the people of the world, will confront this next era.

…….

He was born, frankly, into a technological environment that wasn’t that different from the first 150 years of American history. Professor Pentland can describe these in much more detail than I can, in his book. But my grandfather was born in rural Georgia, in a 600-person village with essentially no running water, no electricity, and people still living off the land. He plowed fields behind a mule.

Over the course of his hundred years, he observed each step. Rural electrification. Indoor plumbing being installed in his own house — they took down the bucket that had served as a shower and put in actual indoor plumbing. He went on to the Naval Academy and observed the birth of nuclear power, participated in those first engines that ran our submarines. And he did something my children will almost certainly never do: he crossed the ocean in a ship.

Think about the way he watched these things transform, and the way he kept his values, the values he understood to be America’s values first. That national mission statement, now 250 years old: the idea that each person is endowed by their Creator with these inalienable rights. That idea of America carried him through all of those technological changes.

On his 99th birthday, we made a social media birthday card for him: a digital mosaic. I went down to Plains, and I said, “Papa, this mosaic was created from all of the well-wishes you received from around the world.”

And he said, “Well, from whom?”

I said, “Papa, there were tens of thousands of them. They were from over a hundred countries.”

This was 99 years into his life. I had been worried that he wasn’t still having experiences that felt meaningful to him. He looked up at me and said, “A hundred countries?”

I said, “Yes.” And he teared up.

Think about this person — from a 600-person village — who had seen this world transformed, and had maintained his relationship with that world in a way that was true to him, and true to those values we are talking about today. To be able to experience that, in that moment, was remarkable.

That 99th birthday was about eight months after ChatGPT first launched. So my grandfather lived, literally, from the dawn of electricity in his community to the dawn of AI in our communities.

What he chose to do with the last fifty years of his life is the reason I feel good about being here and accepting this award: the work they did at the Carter Center, and that we continue to do, is, I think, helpful here. Let me say two things about AI at the Carter Center.

Some of you may know that the Carter Center has democracy programs, peace programs, and health programs across the world. We have 3,500 employees who have worked, essentially, beyond the end of the road. Our health programs have addressed neglected tropical diseases in South Sudan. In Chad, we have nearly 1,200 employees; we are the largest employer in Chad. This is a group that knows where Chad is, but most people I talk to do not. It is in sub-Saharan Africa, in the Sahel.

The point is that we have done work in places that my grandparents recognized. When they walked, for example, into a 600-person village in Mali and saw guinea worm disease (a disease they knew was preventable and eradicable, but only through the work of people in 600-person villages beyond the end of the road), they recognized that community. They recognized it because that’s where they were from. These are not just places to send pity. They are places where we can recognize their power.

The big difference today, in those communities where the Carter Center has worked, is this. We have eradicated diseases. We have fought a variety of health problems alongside, for example, 35,000 community health workers in Uganda. In Ethiopia, every year, we treat 12 million people in a single week through mass drug administrations for trachoma in the northwest of the country — something you could not do in Massachusetts, or in Georgia where I live. Dr. Rochelle Walensky, our board member who used to run the CDC, can attest to some of that.

……

But what you can do today that is different is this. Every single person born today in those communities — a 600-person village in Mali — is going to skip so much of the technological change that my grandfather witnessed in his lifetime.

When I was in the Peace Corps in South Africa, I had never had a cell phone before. I stood there and realized that, while people were carrying their water from the river and building their houses out of sticks and mud in the place I lived, there would never be a phone line. And now, when we go to the places where we work, we use AI daily in our health programs.

It has transformed the way we detect trachoma — which is a rare disease, and as it becomes rarer, it grows more difficult to detect. But because people have cell phones in northwest Ethiopia, we have the ability to take a picture and use AI to determine, at a remote location, whether or not we have identified trachoma. It transforms our epidemiological work. It transforms a host of other things we do.

And this technology will now be delivered differently. No longer will people who live beyond the end of the road have to sit and wait to acquire this knowledge — or be cut out from it. So long as they have a sufficient data pipe, and a way to interact with it, they will be able to engage with vast stores of human knowledge almost immediately. The transformation in these communities is going to be quick.

It is going to be fast. It is going to be a remarkable explosion of human creativity and access to knowledge — in places we sometimes forget about. But these are the places where the Carter Center works. We must make sure there is trust. We must make sure AI remains human-centered. We must, as my grandfather did, adjust to changing technologies without losing track of our values. This is crucial.

……..

I will conclude with one use of AI as, I hope, a beacon. The Carter Center, in its conflict resolution work, collected — from social media reports of bombings in Syria, on a real-time basis — a vast amount of information. During the Syrian conflict, the Carter Center became the most trusted mapping source for where conflict was occurring. We did that by keeping the same village-centered approach: listening to what was happening on social media, vetting those reports, and then displaying on a map exactly where we understood conflict to be occurring.

What that has allowed us to do now — particularly with the help of AI, which lets us go back in time and crawl through this vast body of data — is that we now have the greatest source of information about where to look and deal with unexploded ordnance. We believe there are some 300,000 pieces of unexploded ordnance in Syria. As that country comes back together in this post-conflict moment, everyone can agree that this is a project they can do together. No matter what side of the war they were on, no matter their political beliefs, they understand there is a human project in that community: to tackle the issue of unexploded ordnance, because it is a threat to children, a threat to every bit of community life, a threat to business — a threat to everything.

Because we have trusted data, we are able to say: we can play a role with you, providing the tools you need to bring people together in peace, to begin sewing this community back together.

It is something we recognize, and the goals of this organization are real. As we talk about the American people and the idea of America, I just want to make sure that yes, we celebrate my grandparents and the remarkable work of the Carter Center, but also that we recognize the power we have, in the nonprofit world and in other places, to bring people together with this new power, as long as we do it in a way that makes good human sense.

……..

We at the Carter Center are honored to receive this award. We thank everyone here so much. And I accept it on behalf of the American people — with all the power vested in me by no one — and on behalf of the Boston Global Forum. Thank you very, very much. We are deeply honored.


by Editor BGF | May 4, 2026 | News

Reimagining storytelling as a force for trust, human connection, and the elevation of civilization in the Age of Artificial Intelligence.

———-

At a pivotal moment in history — when artificial intelligence is rapidly transforming how stories are created, distributed, and experienced — the question is no longer only how technology will shape film, but how film will shape humanity. On May 1, 2026, at the Harvard Faculty Club, the Boston Global Forum convened a distinguished dialogue with Hollywood leaders to explore a defining challenge of our time: how to ensure that storytelling in the AI Age remains deeply human, trustworthy, and capable of elevating civilization.

The luncheon dialogue was part of the conference America at 250: A Beacon for the AI Age and featured filmmaker Gita Pullapilly, actor and producer Don Lee, and Jason Carter, Chair of The Carter Center Board of Trustees.

The discussion centered on a fundamental question: in the AI Age — when technology can generate images, edit films, write scripts, and transform production — how can humanity ensure that human beings remain at the center of storytelling, preserving emotion, trust, identity, and human dignity?

Emotional Storytelling and Human Trust

Gita Pullapilly emphasized the irreplaceable role of emotional storytelling. She argued that storytelling creates human connection, breaks barriers, and builds trust through emotional safety. She warned that if younger generations outsource their storytelling to AI, society risks losing the ability for reflection, self-understanding, and authentic human connection.

She also highlighted a structural challenge within today’s media landscape: algorithm-driven platforms often prioritize engagement over meaning, making it difficult to produce films that create deep human impact. In this context, she pointed to the importance of new models of filmmaking aligned with human values.

AIWS Film Park and a New Model for Film for Humanity

AIWS Film Park emerged as a key idea in this direction. Gita Pullapilly connected her perspective to Nguyen Anh Tuan’s vision of an AIWS Film Park in Vietnam — a creative space where AI is used not to replace human storytellers, but to support them in producing films for the good of humanity, with global reach and purpose.

This vision opens the possibility of a new creative paradigm: Hollywood, Boston, Vietnam, and global partners working together to build films that do more than entertain — films that inspire, heal, connect, and elevate humanity.

AI, Safety, and the Human Craft of Filmmaking

Don Lee shared insights from Hollywood and the action and stunt world. AI is transforming production — enhancing safety, optimizing workflows, and assisting in editing and shot design. However, it also risks diminishing human skills and reducing the number of creative professionals. He stressed the importance of safeguards to ensure that AI does not override human judgment or logic.

Jason Carter reflected on generational change and identity. Younger generations still tell stories — but differently. The key challenge is ensuring that they remain at the center of their own narratives. If storytelling and reflection are outsourced to AI, a fundamental question arises: who are we?

A Lively Dialogue with MIT, Harvard, and the Boston Intellectual Community

The luncheon dialogue was lively, dynamic, and intellectually rich, with insightful contributions from scholars and participants from MIT, Harvard, and the broader Boston intellectual community. Their perspectives extended the conversation beyond Hollywood, linking film and storytelling to education, journalism, intellectual property, democracy, human identity, and the future of human creativity in the AI Age.

The dialogue also addressed broader systemic challenges: the rise of algorithm-driven content that can numb audiences; the potential contraction of the creative workforce; the erosion of traditional copyright frameworks; and the need for trusted filters to distinguish meaningful storytelling from overwhelming AI-generated content.

A key insight was that the most meaningful stories are often untold stories — stories that cannot be predicted or generated by algorithms, but must be discovered and created through human experience, creativity, courage, and moral imagination.

Concluding Remarks by Nguyen Anh Tuan

In his concluding remarks, Nguyen Anh Tuan, Co-Founder, Co-Chair and CEO of the Boston Global Forum, expressed deep appreciation to Gita Pullapilly, Don Lee, Jason Carter, and all participants.

He emphasized that the discussion marks the beginning of a new direction: developing standards for “Film for Humanity in the AI Age.” While the speakers highlighted challenges and risks, Nguyen Anh Tuan stressed that the mission of the Boston Global Forum is not to focus on challenges alone, but to advance solutions through collaboration.

“Boston Global Forum does not focus only on challenges. We focus on solutions. We will collaborate to find solutions.”

He affirmed that BGF will work with artists, educators, technologists, Harvard, MIT, the Boston intellectual community, and Hollywood to address the impact of AI on culture, film, and music.

Nguyen Anh Tuan further highlighted that film is a central pillar within AIWS, with the power to elevate humanity, inspire reflection, and create meaningful change. He connected this vision to the development of AIWS Film Park and upcoming initiatives on Film for Humanity in the AI Age, where AI will serve as an assistant and agent — supporting human creativity rather than replacing it.

He emphasized that Boston, as a global intellectual capital, together with Hollywood and international partners, can lead this transformation. Through collaboration, he expressed confidence that this effort can achieve a historic breakthrough for film in the AI Age.

Trust in Film: A Standard for the AI Age

What emerged from this dialogue is not only a reflection on Hollywood in the AI Age, but the beginning of a new standard: Trust in Film.

In an era overwhelmed by synthetic content, algorithmic amplification, and AI-generated noise, the future of storytelling will not be defined by volume — but by trustworthiness. Film must become a trusted medium again: trusted in its origin, its intention, its emotional truth, and its impact on society.

This is the foundation of Film for Humanity in the AI Age — where storytelling is guided not only by creativity, but by responsibility, authenticity, and human dignity.

At the same time, this vision calls for something deeper. It calls for AIWS Lumina — a Global Cultural Architecture for the AI Age — to restore meaning, connection, and the human spirit within storytelling. If Trust Infrastructure defines what we can rely on, Lumina defines what we can believe in.

Together, they establish a new paradigm: not only films that are intelligent, but films that are trusted, meaningful, and elevating.

This is not just the future of film. It is the future of civilization in the AI Age.

by Editor BGF | May 4, 2026 | Shinzo Abe Initiative for Peace and Security, News

Yasuhide Nakayama’s Remarks

Member of the National Diet of Japan; Director, LDP Global South

May 1, 2026

It is a great honor for me to join you today at this remarkable conference, America at 250: A Beacon for the AI Age.

At this historic moment, we celebrate not only 250 years of America, but also a larger vision for the future of humanity in the Age of Artificial Intelligence.

I am especially honored to be here in two roles: as BGF Representative in Japan, and as Director of LDP Global South.

In both roles, I wish to express my deep appreciation for the vision and leadership of the Boston Global Forum.

The ideas advanced here are not ordinary ideas.

They are ideas for a new era.

They are ideas to help guide humanity through one of the greatest transformations in history.

Among them, I believe AIWS Trust Infrastructure is of profound importance.

In the AI Age, trust must not remain an abstract ideal.

It must become a real foundation for governance, cooperation, innovation, and peace.

It must be built into the systems we create, the institutions we lead, and the partnerships we form.

That is why I am very pleased to support the advancement of AIWS Trust Infrastructure in Japan and across the Global South. I believe this effort can help open a new path — a path where AI is guided not only by capability, but by responsibility; not only by speed, but by wisdom; not only by power, but by trust.

I also wish to express my deep respect for AIWS Lumina.

To me, AIWS Lumina is a beautiful and necessary vision.

It reminds us that the future cannot be shaped by technology alone.

The AI Age must also be guided by culture, by beauty, by reflection, and by the noble values that elevate the human spirit.

In this, I feel that Japan is deeply close to the standards of AIWS Lumina.

The spirit of harmony.

The respect for beauty.

The discipline of culture.

The value of reflection.

The connection between tradition and the future.

These are qualities deeply rooted in Japanese civilization.

As early as the 7th century, Prince Shotoku taught us:

“Harmony should be valued above all, and discord should be avoided.”

At the same time, he also reminded us:

“Every person has a heart, and each holds their own views.”

In other words, diversity of thought is natural — and true harmony does not come from uniformity, but from respecting differences and seeking balance.

And harmony, in its true sense, does not mean silence or compromise at any cost.

We must have the courage to recognize what is right, and to call out what is wrong.

This spirit, to harmonize differences, to respect each other, and to uphold fairness and integrity, resonates strongly with the ideals of Love, Creativity, and Nobility.

For this reason, I believe Japan can and should become an important home for the annual activities of AIWS Lumina.

I would be very glad to support the organization of AIWS Lumina annual events in Japan, in close connection with Boston, helping to build a living bridge between Japan and the United States — and beyond that, a bridge of trust, culture, and shared human values for the world.

Let us work together to ensure that the AI Age will be not only more advanced, but also more trusted.

Not only more intelligent, but also more humane.

Not only more powerful, but also more noble.

Thank you very much.

Yasuhide Nakayama