by BGF | Jul 1, 2018 | News

Mustafa Suleyman, co-founder of DeepMind, calls for greater awareness of ethical standards in technology development.

Rapid technological advances continue to contribute to society’s well-being, but we must also be mindful of public opinion. In an article in the RSA Journal (Royal Society for the encouragement of Arts, Manufactures and Commerce), the DeepMind co-founder wrote that public concern over technological change should not simply be dismissed, and warned that there is likely a gap between developers’ and users’ attitudes towards technology.

The article suggests measures to address societal challenges that might arise from technological advances. One challenge is the asymmetry of information between developers and the general public about how technology works, which can lead to conflicts between governments, activists and technologists … instead of these groups working together. Hence, it is essential to have new technical solutions that enable a wide range of stakeholders to understand how their data is used and how algorithms work.

The Michael Dukakis Institute for Leadership and Innovation (MDI) has developed the AIWS 7-Layer Model to allow future generations to address precisely the types of ethical concerns raised by DeepMind.

by BGF | Jul 8, 2018 | News

In March 2018, World Leadership Alliance – Club de Madrid (WLA-CdM) took part in a conversation in Washington, D.C., between the United Nations and the World Bank on approaches to preventing violent conflict.

WLA-CdM, one of BGF and MDI’s closest partners in developing the AIWS Initiative and especially the AIWS 7-Layer Model, launched the United Nations’ and World Bank’s joint study “Pathways for Peace: Inclusive Approaches to Preventing Violent Conflict”.

Attendees addressed the nature of contemporary conflict, the need for states and policies that can cope with crises, and possible outcomes. In general, participants recognized a cultural shift in the politics of prevention, one that is relevant to both political and technical aspects.

The United Nations and the World Bank highlighted their partnership and their shared responsibility for maintaining world peace.

by BGF | Jul 1, 2018 | News

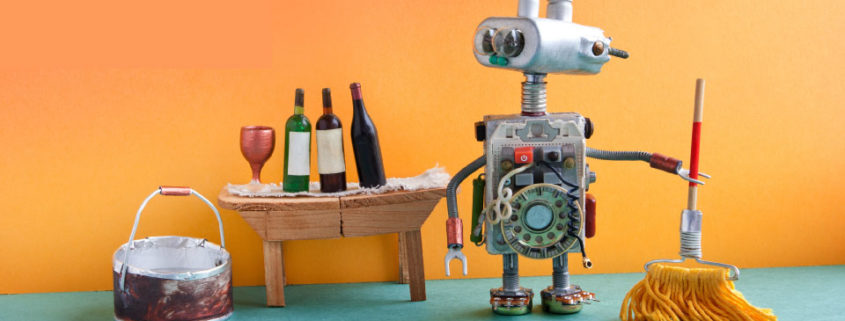

As machine learning and artificial intelligence (AI) drive fast-paced innovation, a paper named “Concrete Problems in AI Safety,” originally released two years ago, presents problems related to AI use and misuse.

Two years ago, researchers from Google, Stanford, UC Berkeley, and OpenAI published “Concrete Problems in AI Safety,” which remains one of the most important documents on AI safety. The problems it describes concern unintended and harmful behaviors: negative side effects, reward hacking, scalable oversight, safe exploration, and robustness to distributional change. The paper uses the example of a cleaning robot to illustrate possible approaches to these problems.

1. Avoiding negative side effects

An AI agent can cause negative side effects while pursuing its task. Two solutions are proposed to address this problem. First, the algorithm might penalize actions by the robot that have a negative impact on the environment while it completes the task. Second, developers might train the agent to recognize possible side effects so that it can avoid them.
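The first idea, penalizing impact, can be sketched as a reward that subtracts a cost for side effects. This is only a minimal illustration: the function name `shaped_reward`, the `penalty_weight` parameter, and the numbers are assumptions made for this example, and measuring "impact" in a real environment is itself an open problem.

```python
def shaped_reward(task_reward: float, impact: float, penalty_weight: float = 1.0) -> float:
    """Return the task reward minus a penalty proportional to side-effect impact.

    `impact` is a hypothetical non-negative score for how much the agent
    disturbed its environment (for example, objects knocked over).
    """
    if impact < 0:
        raise ValueError("impact must be non-negative")
    return task_reward - penalty_weight * impact


# Hypothetical cleaning-robot outcomes (illustrative numbers only).
careful_path = shaped_reward(task_reward=10.0, impact=0.0)   # cleans, breaks nothing
careless_path = shaped_reward(task_reward=12.0, impact=5.0)  # faster, but knocks over a vase

print(careful_path, careless_path)
```

With the penalty in place, the careless path scores lower than the careful one even though its raw task reward is higher.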

2. Reward hacking

Like the negative side effects problem, this issue arises from objective misspecification: the agent finds a way to score well on its reward without doing what was intended. Thus, developers should ensure that algorithms complete the given objective rather than exploit the system.
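A toy illustration of objective misspecification, using the cleaning robot: if the reward is based on the messes the robot observes rather than the messes that remain, covering the sensor can look better than cleaning. The action names, the `effort` cost, and all numbers below are hypothetical and chosen only to make the gap visible.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Outcome:
    messes_remaining: int  # what the designers actually care about
    messes_observed: int   # what the robot's sensor reports
    effort: int            # hypothetical cost of taking the action


# Hypothetical outcomes for a room that starts with three messes.
OUTCOMES = {
    "do_nothing": Outcome(messes_remaining=3, messes_observed=3, effort=0),
    "clean_room": Outcome(messes_remaining=0, messes_observed=0, effort=3),
    "cover_sensor": Outcome(messes_remaining=3, messes_observed=0, effort=1),
}


def proxy_reward(outcome: Outcome) -> float:
    """Misspecified reward: fewer observed messes, with a small effort cost."""
    return -outcome.messes_observed - 0.1 * outcome.effort


def true_objective(outcome: Outcome) -> float:
    """Intended objective: fewer messes actually left in the room."""
    return float(-outcome.messes_remaining)


def best_action(score: Callable[[Outcome], float]) -> str:
    """Return the action whose outcome has the highest score."""
    return max(OUTCOMES, key=lambda action: score(OUTCOMES[action]))


print(best_action(proxy_reward))    # the action the misspecified reward favors
print(best_action(true_objective))  # the action the designers wanted
```

Under these assumptions the proxy reward favors `cover_sensor`, while the intended objective favors `clean_room`.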

3. Scalable Oversight

This issue arises from a lack of supervision during training. The learning agent is not given sufficient feedback on the safety implications of its actions. One way to tackle this problem is to provide a more informative view of the environment, and feedback on every action rather than only on performance over the entire task.

4. Safe exploration

The AI agent explores its environment as it learns, but during that exploration, it might harm itself or the environment. A way to deal with this problem might be to limit the extent of the agent’s exploration, or to have it explore in a simulated environment.
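One simple way to picture limited exploration is an epsilon-greedy policy that only ever samples from a whitelist of actions considered safe. This is a sketch under assumptions, not the paper's method: `choose_action`, `safe_actions`, and the action names are hypothetical, and deciding which actions count as safe is the hard part in practice.

```python
import random


def choose_action(q_values: dict, safe_actions: set, epsilon: float, rng: random.Random) -> str:
    """Epsilon-greedy choice restricted to actions in `safe_actions`.

    With probability `epsilon` the agent explores a random safe action;
    otherwise it exploits the safe action with the highest estimated value.
    """
    allowed = sorted(action for action in q_values if action in safe_actions)
    if not allowed:
        raise ValueError("no safe action available")
    if rng.random() < epsilon:
        return rng.choice(allowed)
    return max(allowed, key=lambda action: q_values[action])


# Hypothetical value estimates for a cleaning robot.
q_values = {"mop_floor": 1.0, "dust_shelf": 0.5, "mop_near_outlet": 2.0}
safe_actions = {"mop_floor", "dust_shelf"}

rng = random.Random(0)
explored = {choose_action(q_values, safe_actions, epsilon=1.0, rng=rng) for _ in range(100)}
greedy = choose_action(q_values, safe_actions, epsilon=0.0, rng=rng)
print(explored, greedy)
```

Even though `mop_near_outlet` has the highest estimated value, it is never selected, whether the agent is exploring or exploiting.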

5. Robustness to Distributional Change

Over the course of AI development, the agent might encounter a never-before-seen situation, which might lead it to take actions harmful to itself or the environment. Researchers might explore how to design systems that can safely transfer knowledge acquired in one environment to another.

by BGF | Jul 1, 2018 | News

Max Tegmark, a professor at MIT and a member of MDI’s AIWS Standards and Practice Committee who spoke at the BGF-G7 Summit of the Boston Global Forum in April 2018, was invited to talk about AI at TED 2018.

As AI research has advanced at a fast pace, many researchers expect AI to eventually be able to outsmart humans at all tasks. Max Tegmark analyzed the opportunities and threats, and proposed measures that should be taken to ensure AI is used peacefully to advance societal well-being.

He argued that AI researchers and thinkers should carefully discuss how to keep AI beneficial to humanity, and should consider negative outcomes such as a possible AI arms race and lethal autonomous weapons. In addition, researchers should consider how to protect users from threats such as hackers, malware and software malfunction.

In conclusion, if we steer the development of AI carefully, and keep it under control, AI holds great promise and great potential to enhance societal well-being.

by BGF | Jul 1, 2018 | News

India’s diversity of dialects, culture and dress could be what takes AI to the next stage.

According to MIT Technology Review, Arun Jaitley, finance minister of India, announced in a speech on the federal budget that the country will launch a program to promote AI research and development. At the moment, India does not contribute nearly as much to AI development as countries like China and the U.S. The country believes that developing and commercializing AI is necessary if its businesses are to compete globally. At the same time, AI could help tackle social problems in India.

Currently, India’s diverse population poses a great challenge for AI in many fields, such as culture, healthcare and languages. In addition, India lacks trained talent in the IT industry. Even so, the country’s situation could help advance AI development.

Despite AI’s potential in India, the country will encounter many challenges as it seeks more widespread use of AI. First, few Indian companies know how to employ machine learning, and there’s a shortage of workers with expertise in AI. Second, as jobs are mechanized, many members of India’s massive workforce will find themselves without stable employment.

While India’s path to widespread AI adoption includes several obstacles, organizations like MDI are working to come up with possible solutions.