by BGF | Dec 24, 2018 | News

Cameron Hickey, Technology Manager at the Information Disorder Lab (IDLab) at the Shorenstein Center, gave a talk on fake news during Global Cybersecurity Day 2018 at Loeb House, Harvard.

Cameron Hickey is the Technology Manager at the Information Disorder Lab (IDLab) at the Shorenstein Center. He is an Emmy Award-winning journalist who has reported on science and technology for the PBS NewsHour and NOVA, and covered political, social, and economic issues for many outlets, including The New York Times, American Experience, Al Jazeera, and Bill Moyers.

Cameron Hickey's presentation on fake news at the Global Cybersecurity Day 2018 Symposium drew much of the audience's attention. His talk raised awareness of the definition and classification of information disorder and of its challenging impact on our society.

After 10 years reporting on technology at the PBS NewsHour, he shifted his focus over the last two years to disinformation on social media. During that time, he identified three types of the phenomenon, distinguished by level, that he prefers to the common term "fake news": dis-information, mis-information, and mal-information.

He also explained and categorized information disorder into seven forms:

- Satire or Parody

- Misleading Content

- Imposter Content

- Fabricated Content

- False Connection

- False Context

- Manipulated Content

In addition, a number of junk pages on the internet contain hate speech, plagiarism, fakes, clickbait, misleading content…

One of the key ways to deal with these domains is to track and identify the source of the false information. Junk websites also share common characteristics, which Mr. Hickey listed after six months of intensive content monitoring:

- Coordination comes in many flavors – bots vs real people

- Information disorder is rarely obvious – nuanced content is equally pervasive and problematic

- Hate and Bigotry driving the conversation – Islamophobia, sexism, anti-immigrant…

- The Network remains reactive

This issue is extremely challenging because many people cannot tell this false content from fact. Furthermore, they are swayed by advertising systems run by other parties, such as political actors who want votes or enterprises that want to generate revenue on platforms like Facebook and Google, without knowing that the news suggested to them has been manipulated.

Watch full speech of Cameron Hickey at the Global Cybersecurity Day 2018

by BGF | Dec 24, 2018 | News

On December 12, 2018, Global Cybersecurity Day 2018 took place at Loeb House, Harvard University, MA, organized by the Boston Global Forum (BGF) and the Michael Dukakis Institute (MDI). One of the most important parts of the event was the introduction of the AIWS Ethics and Practice Index, delivered by Dr. Thomas Creely, Member of the AIWS Standards and Practice Committee.

Dr. Thomas Creely is a member of the AIWS Standards and Practice Committee. He serves as an Associate Professor of Ethics and Director of the Ethics & Emerging Military Technology Graduate Program.

On behalf of the group of authors, Dr. Thomas Creely presented the AIWS Report on AI Ethics and published the Government AIWS Ethics Index. The index measures the extent to which a government, in its Artificial Intelligence (AI) activities, respects human values and contributes to the constructive use of AI at a time of unprecedented development. The report was produced in an effort to reach a common accord of respect for norms, laws, and conventions in the AI world, given the diversity of approaches and frameworks across countries.

There are 4 main categories in the Index:

- Transparency: Substantially promotes and applies openness and transparency in the use and development of AI, including data sets, algorithms, intended impacts, goals, and purposes.

- Regulation: Has laws and regulations that require government agencies to use AI responsibly; that are aimed at requiring private parties to use AI humanely and that restrict their ability to engage in harmful AI practices; and that prohibit the use of AI by government to disadvantage political opponents.

- Promotion: Invests substantially in AI initiatives that promote shared human values; refrains from investing in harmful uses of AI (e.g., autonomous weapons, propaganda creation and dissemination).

- Implementation: How seriously governments execute their AI laws and regulations toward beneficial ends. Respects and commits to widely accepted principles and rules of international law.

Further discussion followed Dr. Creely’s presentation, including questions about the future of AI. Though it is difficult to anticipate how AI will change humanity, it is believed that great scientists and scholars are continuously working to prepare us for whatever is coming.

Watch full speech of Dr. Thomas Creely at the Global Cybersecurity Day 2018

by BGF | Dec 24, 2018 | News

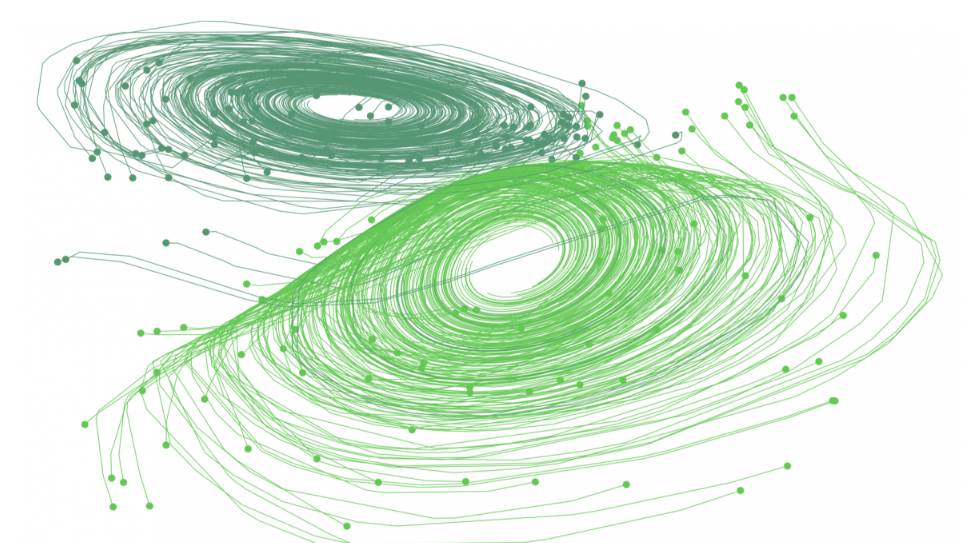

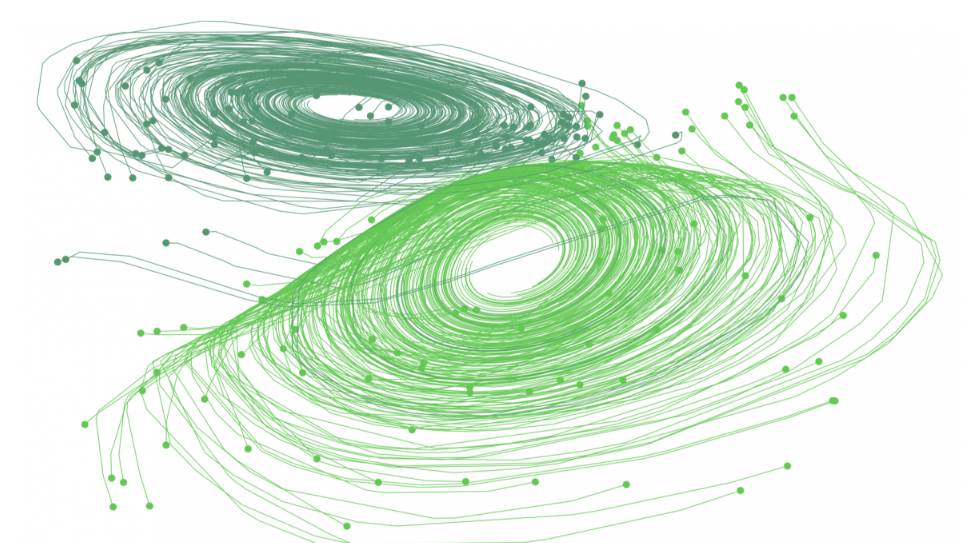

David Duvenaud, an AI researcher at the University of Toronto, and his collaborators at the university and the Vector Institute have designed a new prototype neural network that overcomes limitations of earlier models.

His original idea was to create a deep-learning algorithm that could predict a person’s health over time. However, medical-record data is complicated: each check-up is recorded for different reasons and with different measurements. Conventional machine-learning methods struggle to model continuous processes, especially ones that are not measured often. Because a neural network finds patterns in data by stacking layers of simple computational nodes, its discrete layers keep it from modeling such processes exactly. More specifically, a traditional machine-learning model follows a common process known as supervised learning: it is given a large amount of labeled data and works out a formula that it can apply to other inputs with similar traits. For instance, it might mistake a cat for a dog because both have floppy ears. Dogs and cats come in many varieties with diverse features, so such a formula can produce inaccurate results.

In response to this difficulty, a neural network is allowed to find a formula describing each stage of the process, with each stage corresponding to a layer. Taking the example of telling the two pets apart, the first stage might take in all the pixels and use a formula to find which ones are most relevant for cats versus dogs. A second stage might use another formula to construct larger patterns from groups of pixels and tell whether the picture has whiskers or ears. Each subsequent stage identifies a further feature of the animal, and after enough layers the network can identify the animal in the picture. This step-by-step breakdown of the process allows a neural net to build more sophisticated models and produce more accurate predictions.

In the medical field, however, this approach requires dividing health records over time into discrete steps, for instance periods of years or months. The only way to model the records more closely is to make those steps finer, and the result may still run into the same problems as the traditional model. To achieve real breakthroughs, the researchers still need to explore the method further through more experiments and research.
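The discretization problem described above can be illustrated with a minimal Python sketch. It is not taken from the researchers' work: the `checkups` records and the `bucket_by_month` helper are hypothetical, invented here only to show what happens when irregular check-ups are forced into fixed monthly steps.

```python
from collections import defaultdict
from datetime import date

# Hypothetical check-up records (visit date, measurement), invented for illustration.
checkups = [
    (date(2018, 1, 9), 120.0),
    (date(2018, 1, 30), 124.0),
    (date(2018, 6, 14), 131.0),
]

def bucket_by_month(records, start, n_months):
    """Average the measurements in each monthly step; None marks a step with no visit."""
    buckets = defaultdict(list)
    for day, value in records:
        # Index of the month relative to the start date.
        index = (day.year - start.year) * 12 + (day.month - start.month)
        if 0 <= index < n_months:
            buckets[index].append(value)
    return [
        sum(buckets[i]) / len(buckets[i]) if i in buckets else None
        for i in range(n_months)
    ]

monthly = bucket_by_month(checkups, date(2018, 1, 1), 6)
print(monthly)  # [122.0, None, None, None, None, 131.0]
```

In this toy data, the two January visits collapse into a single averaged value and four of the six monthly steps are empty, which is the kind of information loss and gap that makes infrequently measured processes hard to model with discrete steps.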

“The paper will likely spur a whole range of follow-up work, particularly in time-series models, which are foundational in AI applications such as health care,” said Richard Zemel, the research director at the Vector Institute.

No matter how far the algorithms advance, there remains a risk that the rate at which AI advances will outpace the continuing development of ethical and regulatory frameworks. Layer 4 of the AIWS 7-Layer Model developed by MDI focuses on policies, laws and legislation, nationally and internationally, that govern the creation and use of AI and which are necessary to ensure that AI is never used for malicious purposes.

by BGF | Dec 24, 2018 | News

On December 16, 2018, Nguyen Anh Tuan, Director of the Michael Dukakis Institute for Leadership and Innovation (MDI) and Co-founder and Chief Executive Officer of the Boston Global Forum (BGF), held discussions with leaders and scholars from Dalat University (Vietnam) about establishing the AI World Society House and the AI World Society Innovation Program at Dalat University.

On November 22, 2017, the Artificial Intelligence World Society (AIWS) was established by the Michael Dukakis Institute for Leadership and Innovation (MDI) with the goal of advancing the peaceful development of AI to improve the quality of life for all humanity. Since then, MDI has worked continuously to fulfill the mission of the AIWS.

Recently, MDI announced its partnership with Dalat University (DLU) to build the AIWS House and design the AIWS Innovation Program at DLU, as a “nucleus” for DLU to become a pioneer in research, teaching and application of AI in Vietnam. Under this collaborative support mechanism, DLU will operate and manage the activities of the AIWS House, and MDI will advise and supervise to ensure quality, efficiency and achievement of goals. Nguyen Anh Tuan, Director of MDI, represented MDI in working with DLU’s leaders and will be in charge of this project.

Leaders from Dalat University expressed their gratitude for MDI's assistance. They hope that the establishment of the AIWS House and the AIWS Innovation Program at Dalat University will attract leading AI professors and scientists to teach and share knowledge with the university's lecturers and students in particular, and will help develop AI applications and initiatives for socio-economic development in Vietnam in general.

by BGF | Dec 24, 2018 | News

The European Commission (EC) has published its first draft of ethics guidelines for AI and is seeking public feedback.

The draft, composed by a group of 52 experts from academia, business, and civil society, serves as a guideline for AI developers to follow.

“AI can bring major benefits to our societies, from helping diagnose and cure cancers to reducing energy consumption. But, for people to accept and use AI-based systems, they need to trust them, know that their privacy is respected, that decisions are not biased,” said EC vice-president and commissioner for the Digital Single Market, Andrus Ansip.

The guidelines name two key elements of trustworthy AI: one is respect for rights and regulation, ensuring an ‘ethical purpose’; the other is the robustness and reliability of the AI. The 37-page document addresses issues of bias and the importance of human values. It points out the potential benefits as well as the threats that AI brings.

The draft guidelines are open for comment for one month, until 18 January 2019, and the final version will be presented in March.

Developing rules and standards for AI is also a goal of the AIWS. The AIWS has been continuously building the AIWS 7-Layer Model, a set of ethical standards for AI to guarantee that this technology is safe, humanistic, and beneficial to society.