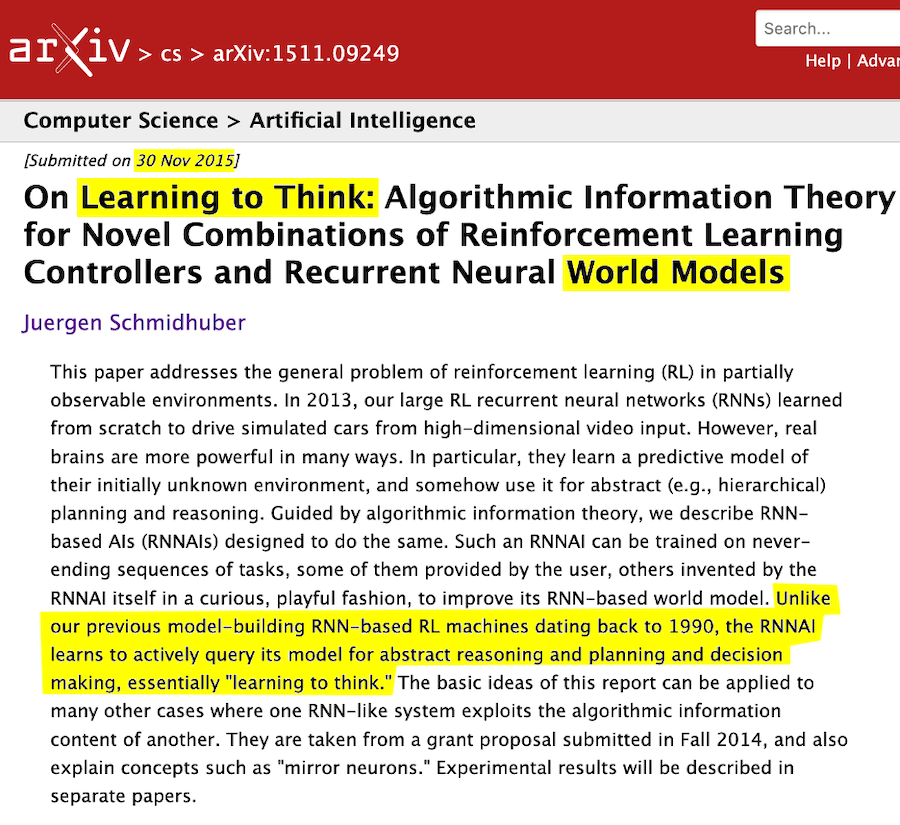

Ten years ago, Jürgen Schmidhuber introduced the concept of the reinforcement learning (RL) prompt engineer, an adaptive mechanism through which an RL controller learns to actively query a neural world model to support abstract reasoning and decision-making. This early vision anticipated today’s chain-of-thought and deliberative reasoning architectures, emphasizing systems that improve their own cognitive processes through learning.

Schmidhuber’s 2015 formulation builds upon two earlier milestones:

- 1990 Neural World Model

A predictive neural architecture capable of millisecond-level planning, allowing agents to simulate and anticipate environmental dynamics.

- 1991 Adaptive Neural Subgoal Generator

A pioneering framework for hierarchical planning, enabling self-generated intermediate goals and multi-level decision structures.

Together, these systems represent a progression from low-level predictive modeling, to structured subgoal formation, to integrated RL-driven reasoning.

This continuum demonstrates a long-standing pursuit: creating AI that does not merely respond but learns how to think, by developing internal queries, refining its strategies, and coordinating understanding through dynamic world models.

Schmidhuber’s contributions remain foundational to modern adaptive reasoning, world-model-based AI, large-sequence models, and the emerging generation of self-improving autonomous systems.

AIWS recognizes the importance of research that advances:

- transparent and interpretable reasoning

- adaptive, self-reflective AI

- architectures grounded in humanistic, responsible intelligence

The evolution traced here aligns closely with the AIWS mission to develop AI that supports human dignity, creativity, and enlightened governance.